
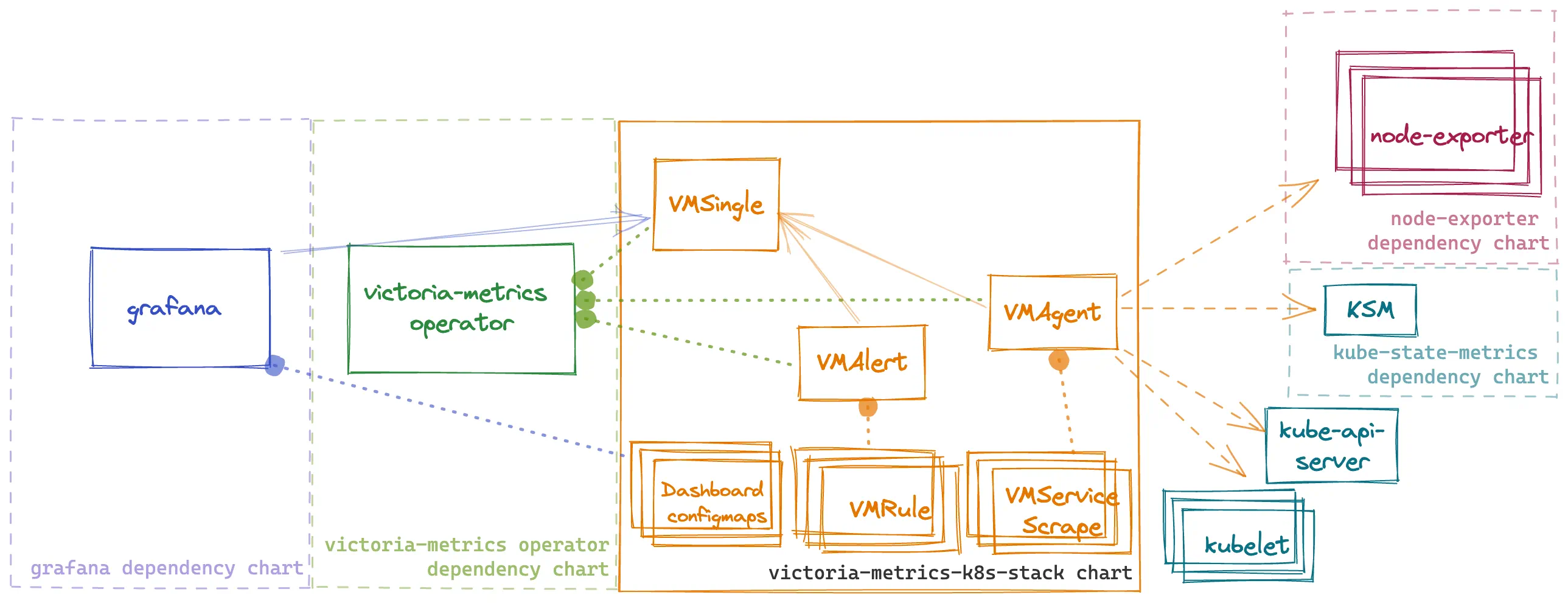

Kubernetes monitoring on the VictoriaMetrics stack. Includes VictoriaMetrics Operator, Grafana dashboards, ServiceScrapes and VMRules.

- Overview

- Configuration

- Prerequisites

- Dependencies

- Quick Start

- Uninstall

- Version Upgrade

- Troubleshooting

- Values

Overview

This chart is an all-in-one solution for monitoring a Kubernetes cluster. It installs multiple dependency charts, such as grafana, node-exporter, kube-state-metrics and victoria-metrics-operator. It also installs Custom Resources such as VMSingle, VMCluster, VMAgent and VMAlert.

By default, the operator converts all existing prometheus-operator API objects into corresponding VictoriaMetrics Operator objects.
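If you don't want the operator to convert existing prometheus-operator objects, the Parameters table lists a switch for it on the operator dependency. A minimal values.yaml sketch, assuming all other operator values stay at their defaults:

```yaml
victoria-metrics-operator:
  operator:
    # turn off conversion of prometheus-operator objects
    # (for example ServiceMonitor or PrometheusRule) into VictoriaMetrics objects
    disable_prometheus_converter: true
```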

To enable metrics collection for Kubernetes, this chart installs multiple scrape configurations for Kubernetes components such as kubelet and kube-proxy. Metrics collection is done by VMAgent. So if you want to ship metrics to an external VictoriaMetrics database, you can disable the VMSingle installation by setting vmsingle.enabled to false and setting the url in vmagent.spec.remoteWrite to your external VictoriaMetrics database.
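A minimal values.yaml sketch of that setup. The URL is a hypothetical placeholder for an external single-node VictoriaMetrics instance, which accepts Prometheus remote write on /api/v1/write; a VMCluster expects a vminsert URL of the form shown under VictoriaMetrics components instead.

```yaml
vmsingle:
  # do not create the bundled VMSingle instance
  enabled: false
vmagent:
  spec:
    remoteWrite:
      # hypothetical external single-node VictoriaMetrics endpoint
      - url: "https://victoria-metrics.example.com/api/v1/write"
```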

This chart also installs a set of dashboards and recording rules from the kube-prometheus project.

Configuration

Configuration of this chart is done through helm values.

Dependencies

Dependencies can be enabled or disabled by setting enabled to true or false in the values.yaml file.

Important: for dependency charts, anything you can find in the values.yaml of a dependency chart can be configured in this chart under the key for that dependency. For example, all possible Grafana options are listed in the Grafana chart's values.yaml, and you set them in this chart's values under the grafana: key. To configure grafana.persistence.enabled, set it in values.yaml like this:

```yaml
#################################################
### dependencies #####
#################################################
# Grafana dependency chart configuration. For possible values refer to https://github.com/grafana/helm-charts/tree/main/charts/grafana#configuration
grafana:
  enabled: true
  persistence:
    type: pvc
    enabled: false
```

VictoriaMetrics components

This chart installs multiple VictoriaMetrics components using Custom Resources that are managed by victoria-metrics-operator.

Each resource can be configured using the spec of that resource from the victoria-metrics-operator API docs. For example, all possible VMAgent options are listed in the API docs, and you set them in this chart's values under the vmagent.spec key. To configure remoteWrite.url, set it in values.yaml like this:

```yaml
vmagent:
  spec:
    remoteWrite:
      - url: "https://insert.vmcluster.domain.com/insert/0/prometheus/api/v1/write"
```
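In the URL above, the number after /insert/ is the VMCluster tenant (account) ID, so this example writes into tenant 0. If metrics should also go to a second destination, the Parameters table lists vmagent.additionalRemoteWrites. A sketch, assuming its entries are added next to the chart's default remote write target; the host and tenant 1 are hypothetical:

```yaml
vmagent:
  additionalRemoteWrites:
    # hypothetical second VMCluster, writing into tenant (account) ID 1
    - url: "https://insert.other-vmcluster.example.com/insert/1/prometheus/api/v1/write"
```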

ArgoCD issues

Operator self signed certificates

When the K8s stack is deployed with ArgoCD without cert-manager (.Values.victoria-metrics-operator.admissionWebhooks.certManager.enabled: false), the operator's webhook certificates are re-rendered on each sync, because ArgoCD does not respect the Helm lookup function.

To prevent this, update spec.syncPolicy and spec.ignoreDifferences of your K8s stack Application as follows:

```yaml
apiVersion: argoproj.io/v1alpha1
kind: Application
...
spec:
  ...
  syncPolicy:
    syncOptions:
      # https://argo-cd.readthedocs.io/en/stable/user-guide/sync-options/#respect-ignore-difference-configs
      # argocd must also ignore difference during apply stage
      # otherwise it will silently override changes and cause a problem
      - RespectIgnoreDifferences=true
  ignoreDifferences:
    - group: ""
      kind: Secret
      name: <fullname>-validation
      namespace: kube-system
      jsonPointers:
        - /data
    - group: admissionregistration.k8s.io
      kind: ValidatingWebhookConfiguration
      name: <fullname>-admission
      jqPathExpressions:
        - '.webhooks[]?.clientConfig.caBundle'
```

where <fullname> is the output of {{ include "vm-operator.fullname" . }} for your setup.
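Since the issue above is described for setups without cert-manager, another option is to let cert-manager issue the webhook certificates by enabling the flag mentioned above. A sketch, assuming cert-manager is already installed in the cluster:

```yaml
victoria-metrics-operator:
  admissionWebhooks:
    certManager:
      # issue webhook certificates via cert-manager instead of
      # using Helm-generated self-signed certificates
      enabled: true
```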

metadata.annotations: Too long: must have at most 262144 bytes on dashboards

If one of the dashboard ConfigMaps fails with the error Too long: must have at most 262144 bytes, make sure you've added the argocd.argoproj.io/sync-options: ServerSideApply=true annotation to your dashboards:

```yaml
grafana:
  sidecar:
    dashboards:
      additionalDashboardAnnotations:
        argocd.argoproj.io/sync-options: ServerSideApply=true
```
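Another ArgoCD-specific setting comes from the Parameters table: when the Application sets a releaseName, VMServiceScrapes may not select the proper services, and argocdReleaseOverride should be set to the ArgoCD application name ($ARGOCD_APP_NAME). A sketch with a hypothetical application name:

```yaml
# must match the name of your ArgoCD Application (hypothetical value)
argocdReleaseOverride: vm-k8s-stack
```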

Rules and dashboards

By default, this chart installs multiple dashboards and recording rules from kube-prometheus. You can disable the dashboards with defaultDashboardsEnabled: false and experimentalDashboardsEnabled: false, and rules can be configured under defaultRules.
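As an illustration of the defaultRules key, the following sketch sets two entries taken from the Parameters table: labels for the default rules and the runbook URL prefix. The label and URL values are hypothetical:

```yaml
defaultRules:
  # labels for the default rules (hypothetical)
  labels:
    team: platform
  # runbook URL prefix for the default rules (hypothetical)
  runbookUrl: "https://runbooks.example.com/"
```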

Adding external dashboards

By default, this chart uses the Grafana sidecar to provision the default dashboards. If you want to add your own dashboards, there are two ways to do it:

- Add dashboards by creating a ConfigMap. An example ConfigMap:

```yaml
apiVersion: v1
kind: ConfigMap
metadata:
  labels:
    grafana_dashboard: "1"
  name: grafana-dashboard
data:
  dashboard.json: |-
    {...}
```

- Use init container provisioning. Note that this option requires disabling sidecar and will remove all default dashboards provided with this chart. An example configuration:

```yaml
grafana:
  sidecar:
    dashboards:
      enabled: false
  dashboards:
    vmcluster:
      gnetId: 11176
      revision: 38
      datasource: VictoriaMetrics
```

When using this approach, you can find dashboards for VictoriaMetrics components published here.

Prometheus scrape configs

This chart installs multiple scrape configurations for Kubernetes monitoring. They are configured under the #ServiceMonitors section in the values.yaml file. For example, to configure the scrape config for kubelet, set it in values.yaml like this:

```yaml
kubelet:
  enabled: true
  # spec for VMNodeScrape crd
  # https://docs.victoriametrics.com/operator/api#vmnodescrapespec
  spec:
    interval: "30s"
```
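Control-plane components that don't run as pods can still be scraped: kubeEtcd, kubeControllerManager, kubeScheduler and kubeProxy each accept an endpoints list with the IPs the component can be found on (see the Parameters table). A sketch for etcd with hypothetical IPs:

```yaml
kubeEtcd:
  enabled: true
  # hypothetical IPs of etcd members that are not deployed as pods
  endpoints:
    - 10.0.0.10
    - 10.0.0.11
    - 10.0.0.12
```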

Using externally managed Grafana

If you want to use an externally managed Grafana instance but still want to use the dashboards provided by this chart, you can set grafana.enabled to false and defaultDashboardsEnabled to true. This installs the dashboards but not Grafana.

For example:

```yaml
defaultDashboardsEnabled: true
grafana:
  enabled: false
```

This will create ConfigMaps with dashboards to be imported into Grafana.

If additional labels or annotations are needed to import the dashboards into an existing Grafana, you can set .grafana.sidecar.dashboards.additionalDashboardLabels or .grafana.sidecar.dashboards.additionalDashboardAnnotations in values.yaml.

For example:

```yaml
defaultDashboardsEnabled: true
grafana:
  enabled: false
  sidecar:
    dashboards:
      additionalDashboardLabels:
        key: value
      additionalDashboardAnnotations:
        key: value
```
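If the external Grafana is managed by grafana-operator, the Parameters table also offers creating the dashboards as grafana-operator CRDs instead of ConfigMaps. A sketch, assuming grafana-operator is installed and your Grafana CR carries a hypothetical dashboards: grafana label:

```yaml
defaultDashboards:
  grafanaOperator:
    # create dashboards as grafana-operator CRDs (requires grafana-operator)
    enabled: true
    spec:
      # select the Grafana instance(s) by label; "grafana" is a hypothetical value
      instanceSelector:
        matchLabels:
          dashboards: grafana
      # allow import into a Grafana instance in another namespace
      allowCrossNamespaceImport: true
grafana:
  enabled: false
```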

Prerequisites

- Install the following packages: git, kubectl, helm, helm-docs. See this tutorial.

- Add the dependency chart repositories:

```shell
helm repo add grafana https://grafana.github.io/helm-charts
helm repo add prometheus-community https://prometheus-community.github.io/helm-charts
helm repo update
```

- PV support on underlying infrastructure.

How to install

Access a Kubernetes cluster.

Set up the chart repository (can be omitted for OCI repositories)

Add the chart Helm repository with the following commands:

```shell
helm repo add vm https://victoriametrics.github.io/helm-charts/
helm repo update
```

List the versions of the vm/victoria-metrics-k8s-stack chart available for installation:

```shell
helm search repo vm/victoria-metrics-k8s-stack -l
```

Install victoria-metrics-k8s-stack chart

Export the default values of the victoria-metrics-k8s-stack chart to the file values.yaml:

- For HTTPS repository

```shell
helm show values vm/victoria-metrics-k8s-stack > values.yaml
```

- For OCI repository

```shell
helm show values oci://ghcr.io/victoriametrics/helm-charts/victoria-metrics-k8s-stack > values.yaml
```

Change the values in values.yaml according to the needs of your environment.

Test the installation with the command:

- For HTTPS repository

```shell
helm install vmks vm/victoria-metrics-k8s-stack -f values.yaml -n NAMESPACE --debug --dry-run
```

- For OCI repository

```shell
helm install vmks oci://ghcr.io/victoriametrics/helm-charts/victoria-metrics-k8s-stack -f values.yaml -n NAMESPACE --debug --dry-run
```

Install the chart with the command:

- For HTTPS repository

```shell
helm install vmks vm/victoria-metrics-k8s-stack -f values.yaml -n NAMESPACE
```

- For OCI repository

```shell
helm install vmks oci://ghcr.io/victoriametrics/helm-charts/victoria-metrics-k8s-stack -f values.yaml -n NAMESPACE
```

Get the list of pods by running this command:

```shell
kubectl get pods -A | grep 'vmks'
```

Get the application by running this command:

```shell
helm list -f vmks -n NAMESPACE
```

See the history of versions of the vmks application with the command:

```shell
helm history vmks -n NAMESPACE
```

Install locally (Minikube)

To run the VictoriaMetrics stack locally, you can use Minikube. To avoid issues with dashboards and alert rules, follow the steps below:

Run Minikube cluster

```shell
minikube start --container-runtime=containerd --extra-config=scheduler.bind-address=0.0.0.0 --extra-config=controller-manager.bind-address=0.0.0.0 --extra-config=etcd.listen-metrics-urls=http://0.0.0.0:2381
```

Install helm chart

```shell
helm install [RELEASE_NAME] vm/victoria-metrics-k8s-stack -f values.yaml -f values.minikube.yaml -n NAMESPACE --debug --dry-run
```

How to uninstall

Remove the application with the command:

```shell
helm uninstall vmks -n NAMESPACE
```

CRDs created by this chart are not removed by default and should be cleaned up manually:

```shell
kubectl get crd | grep victoriametrics.com | awk '{print $1}' | xargs -I {} kubectl delete crd {}
```

Troubleshooting

- If you cannot install the Helm chart because of the error configmap already exist, it may be caused by name collisions when the release name is too long. Kubernetes limits many resource names to 63 characters, and Helm charts truncate generated resource names to 63 characters. To mitigate this, use a shorter release name, for example:

```shell
# stack - is short enough
helm upgrade -i stack vm/victoria-metrics-k8s-stack
```

Or override the name used for the chart's resources:

```shell
helm upgrade -i some-very-long-name vm/victoria-metrics-k8s-stack --set fullnameOverride=stack
```
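To keep the override across upgrades, the same value can live in values.yaml instead of being passed with --set; fullnameOverride is the resource full name prefix override from the Parameters table:

```yaml
# same effect as --set fullnameOverride=stack
fullnameOverride: stack
```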

Upgrade guide

Usually, helm upgrade doesn't require manual actions. Just execute the command:

```shell
$ helm upgrade [RELEASE_NAME] vm/victoria-metrics-k8s-stack
```

However, CRD changes in a release can only be applied manually with kubectl. Since Helm does not update CRDs, we recommend always running the following when updating the chart version:

```shell
# 1. check the changes in CRD
$ helm show crds vm/victoria-metrics-k8s-stack --version [YOUR_CHART_VERSION] | kubectl diff -f -

# 2. apply the changes (update CRD)
$ helm show crds vm/victoria-metrics-k8s-stack --version [YOUR_CHART_VERSION] | kubectl apply -f - --server-side
```

All other manual upgrade actions are listed below:

Upgrade to 0.13.0

- Starting from version 4.0.0, node-exporter uses the Kubernetes recommended labels. Therefore, you have to delete the daemonset before you upgrade:

```shell
kubectl delete daemonset -l app=prometheus-node-exporter
```

- The scrape configuration for Kubernetes components was moved from the vmServiceScrape.spec section to the spec section. If you previously modified the scrape configuration, you need to update your values.yaml.

- grafana.defaultDashboardsEnabled was renamed to defaultDashboardsEnabled (moved to the top level). You may need to update it in your values.yaml.

Upgrade to 0.6.0

All CRDs must be updated to the latest version with the command:

```shell
kubectl apply -f https://raw.githubusercontent.com/VictoriaMetrics/helm-charts/master/charts/victoria-metrics-k8s-stack/crds/crd.yaml
```

Upgrade to 0.4.0

All CRDs must be updated to the v1 version with the command:

```shell
kubectl apply -f https://raw.githubusercontent.com/VictoriaMetrics/helm-charts/master/charts/victoria-metrics-k8s-stack/crds/crd.yaml
```

Upgrade from 0.2.8 to 0.2.9

Update the VMAgent CRD with the command:

```shell
kubectl apply -f https://raw.githubusercontent.com/VictoriaMetrics/operator/v0.16.0/config/crd/bases/operator.victoriametrics.com_vmagents.yaml
```

Upgrade from 0.2.5 to 0.2.6

New CRDs were added to the operator (VMUser and VMAuth), and new fields were added to existing CRDs.

Manual commands:

```shell
kubectl apply -f https://raw.githubusercontent.com/VictoriaMetrics/operator/v0.15.0/config/crd/bases/operator.victoriametrics.com_vmusers.yaml
kubectl apply -f https://raw.githubusercontent.com/VictoriaMetrics/operator/v0.15.0/config/crd/bases/operator.victoriametrics.com_vmauths.yaml
kubectl apply -f https://raw.githubusercontent.com/VictoriaMetrics/operator/v0.15.0/config/crd/bases/operator.victoriametrics.com_vmalerts.yaml
kubectl apply -f https://raw.githubusercontent.com/VictoriaMetrics/operator/v0.15.0/config/crd/bases/operator.victoriametrics.com_vmagents.yaml
kubectl apply -f https://raw.githubusercontent.com/VictoriaMetrics/operator/v0.15.0/config/crd/bases/operator.victoriametrics.com_vmsingles.yaml
kubectl apply -f https://raw.githubusercontent.com/VictoriaMetrics/operator/v0.15.0/config/crd/bases/operator.victoriametrics.com_vmclusters.yaml
```

Documentation of Helm Chart

Install helm-docs by following the instructions in this tutorial.

Generate docs with the helm-docs command:

```shell
cd charts/victoria-metrics-k8s-stack
helm-docs
```

The markdown generation is entirely Go template driven. The tool parses metadata from charts and generates a number of sub-templates that can be referenced in a template file (by default README.md.gotmpl). If no template file is provided, the tool has a default internal template that will generate a reasonably formatted README.

Parameters

The following table lists the configurable parameters of the chart and their default values.

Change the values in the victoria-metrics-k8s-stack/values.yaml file according to the needs of your environment.

| Key | Type | Default | Description |
|---|---|---|---|
| additionalVictoriaMetricsMap | string | | Provide custom recording or alerting rules to be deployed into the cluster. |
| alertmanager.annotations | object | | Alertmanager annotations |
| alertmanager.config | object | | Alertmanager configuration |
| alertmanager.enabled | bool | | Create VMAlertmanager CR |
| alertmanager.ingress | object | | Alertmanager ingress configuration |
| alertmanager.ingress.extraPaths | list | | Extra paths to prepend to every host configuration. This is useful when working with annotation based services. |
| alertmanager.monzoTemplate | object | | Better alert templates for slack source |
| alertmanager.spec | object | | Full spec for VMAlertmanager CRD. Allowed values described here |
| alertmanager.spec.configSecret | string | | If defined, this secret is used for Alertmanager configuration and the config parameter is ignored |
| alertmanager.templateFiles | object | | Extra alert templates |
| argocdReleaseOverride | string | | If this chart is used in ArgoCD with the releaseName field, VMServiceScrapes can't select the proper services. For correct operation, set argocdReleaseOverride=$ARGOCD_APP_NAME |
| coreDns.enabled | bool | | Enable CoreDNS metrics scraping |
| coreDns.service.enabled | bool | | Create service for CoreDNS metrics |
| coreDns.service.port | int | | CoreDNS service port |
| coreDns.service.selector | object | | CoreDNS service pod selector |
| coreDns.service.targetPort | int | | CoreDNS service target port |
| coreDns.vmScrape | object | | Spec for VMServiceScrape CRD is here |
| defaultDashboards.annotations | object | | |
| defaultDashboards.dashboards | object | | Create dashboards as ConfigMaps even if the dependency they require is not installed |
| defaultDashboards.dashboards.node-exporter-full | object | | In ArgoCD using client-side apply, this dashboard reaches the annotations size limit and causes k8s issues without server-side apply. See this issue |
| defaultDashboards.defaultTimezone | string | | |
| defaultDashboards.enabled | bool | | Enable custom dashboards installation |
| defaultDashboards.grafanaOperator.enabled | bool | | Create dashboards as CRDs (requires grafana-operator to be installed) |
| defaultDashboards.grafanaOperator.spec.allowCrossNamespaceImport | bool | | |
| defaultDashboards.grafanaOperator.spec.instanceSelector.matchLabels.dashboards | string | | |
| defaultDashboards.labels | object | | |
| defaultDatasources.alertmanager | object | | List of alertmanager datasources. Alertmanager generated |
| defaultDatasources.alertmanager.perReplica | bool | | Create per replica alertmanager compatible datasource |
| defaultDatasources.extra | list | | Configure additional grafana datasources (passed through tpl). Check here for details |
| defaultDatasources.victoriametrics.datasources | list | | List of prometheus compatible datasource configurations. VM |
| defaultDatasources.victoriametrics.perReplica | bool | | Create per replica prometheus compatible datasource |
| defaultRules | object | | Create default rules for monitoring the cluster |
| defaultRules.alerting | object | | Common properties for VMRules alerts |
| defaultRules.alerting.spec.annotations | object | | Additional annotations for VMRule alerts |
| defaultRules.alerting.spec.labels | object | | Additional labels for VMRule alerts |
| defaultRules.annotations | object | | Annotations for default rules |
| defaultRules.group | object | | Common properties for VMRule groups |
| defaultRules.group.spec.params | object | | Optional HTTP URL parameters added to each rule request |
| defaultRules.groups | object | | Rule group properties |
| defaultRules.groups.etcd.rules | object | | Common properties for all rules in a group |
| defaultRules.labels | object | | Labels for default rules |
| defaultRules.recording | object | | Common properties for VMRules recording rules |
| defaultRules.recording.spec.annotations | object | | Additional annotations for VMRule recording rules |
| defaultRules.recording.spec.labels | object | | Additional labels for VMRule recording rules |
| defaultRules.rule | object | | Common properties for all VMRules |
| defaultRules.rule.spec.annotations | object | | Additional annotations for all VMRules |
| defaultRules.rule.spec.labels | object | | Additional labels for all VMRules |
| defaultRules.rules | object | | Per rule properties |
| defaultRules.runbookUrl | string | | Runbook url prefix for default rules |
| externalVM | object | | External VM read and write URLs |
| extraObjects | list | | Add extra objects dynamically to this chart |
| fullnameOverride | string | | Resource full name prefix override |
| global.clusterLabel | string | | Cluster label to use for dashboards and rules |
| global.license | object | | Global license configuration |
| grafana | object | | Grafana dependency chart configuration. For possible values refer here |
| grafana.forceDeployDatasource | bool | | Create datasource configmap even if grafana deployment has been disabled |
| grafana.ingress.extraPaths | list | | Extra paths to prepend to every host configuration. This is useful when working with annotation based services. |
| grafana.vmScrape | object | | Grafana VM scrape config |
| grafana.vmScrape.spec | object | | Scrape configuration for Grafana |
| kube-state-metrics | object | | kube-state-metrics dependency chart configuration. For possible values check here |
| kube-state-metrics.vmScrape | object | | Scrape configuration for Kube State Metrics |
| kubeApiServer.enabled | bool | | Enable Kube Api Server metrics scraping |
| kubeApiServer.vmScrape | object | | Spec for VMServiceScrape CRD is here |
| kubeControllerManager.enabled | bool | | Enable kube controller manager metrics scraping |
| kubeControllerManager.endpoints | list | | If your kube controller manager is not deployed as a pod, specify IPs it can be found on |
| kubeControllerManager.service.enabled | bool | | Create service for kube controller manager metrics scraping |
| kubeControllerManager.service.port | int | | Kube controller manager service port |
| kubeControllerManager.service.selector | object | | Kube controller manager service pod selector |
| kubeControllerManager.service.targetPort | int | | Kube controller manager service target port |
| kubeControllerManager.vmScrape | object | | Spec for VMServiceScrape CRD is here |
| kubeDns.enabled | bool | | Enable KubeDNS metrics scraping |
| kubeDns.service.enabled | bool | | Create Service for KubeDNS metrics |
| kubeDns.service.ports | object | | KubeDNS service ports |
| kubeDns.service.selector | object | | KubeDNS service pods selector |
| kubeDns.vmScrape | object | | Spec for VMServiceScrape CRD is here |
| kubeEtcd.enabled | bool | | Enable KubeETCD metrics scraping |
| kubeEtcd.endpoints | list | | If your etcd is not deployed as a pod, specify IPs it can be found on |
| kubeEtcd.service.enabled | bool | | Enable service for ETCD metrics scraping |
| kubeEtcd.service.port | int | | ETCD service port |
| kubeEtcd.service.selector | object | | ETCD service pods selector |
| kubeEtcd.service.targetPort | int | | ETCD service target port |
| kubeEtcd.vmScrape | object | | Spec for VMServiceScrape CRD is here |
| kubeProxy.enabled | bool | | Enable kube proxy metrics scraping |
| kubeProxy.endpoints | list | | If your kube proxy is not deployed as a pod, specify IPs it can be found on |
| kubeProxy.service.enabled | bool | | Enable service for kube proxy metrics scraping |
| kubeProxy.service.port | int | | Kube proxy service port |
| kubeProxy.service.selector | object | | Kube proxy service pod selector |
| kubeProxy.service.targetPort | int | | Kube proxy service target port |
| kubeProxy.vmScrape | object | | Spec for VMServiceScrape CRD is here |
| kubeScheduler.enabled | bool | | Enable KubeScheduler metrics scraping |
| kubeScheduler.endpoints | list | | If your kube scheduler is not deployed as a pod, specify IPs it can be found on |
| kubeScheduler.service.enabled | bool | | Enable service for KubeScheduler metrics scrape |
| kubeScheduler.service.port | int | | KubeScheduler service port |
| kubeScheduler.service.selector | object | | KubeScheduler service pod selector |
| kubeScheduler.service.targetPort | int | | KubeScheduler service target port |
| kubeScheduler.vmScrape | object | | Spec for VMServiceScrape CRD is here |
| kubelet | object | | Component scraping the kubelets |
| kubelet.vmScrape | object | | Spec for VMNodeScrape CRD is here |
| kubelet.vmScrapes.cadvisor | object | | Enable scraping /metrics/cadvisor from kubelet's service |
| kubelet.vmScrapes.probes | object | | Enable scraping /metrics/probes from kubelet's service |
| nameOverride | string | | Resource full name suffix override |
| prometheus-node-exporter | object | | prometheus-node-exporter dependency chart configuration. For possible values check here |
| prometheus-node-exporter.vmScrape | object | | Node Exporter VM scrape config |
| prometheus-node-exporter.vmScrape.spec | object | | Scrape configuration for Node Exporter |
| prometheus-operator-crds | object | | Install prometheus operator CRDs |
| serviceAccount.annotations | object | | Annotations to add to the service account |
| serviceAccount.create | bool | | Specifies whether a service account should be created |
| serviceAccount.name | string | | The name of the service account to use. If not set and create is true, a name is generated using the fullname template |
| tenant | string | | Tenant to use for Grafana datasources and remote write |
| victoria-metrics-operator | object | | VictoriaMetrics Operator dependency chart configuration. More values can be found here. Also check out here the possible ENV variables to configure operator behaviour |
| victoria-metrics-operator.crds.plain | bool | | Added temporarily, until a new operator version is released |
| victoria-metrics-operator.operator.disable_prometheus_converter | bool | | By default, operator converts prometheus-operator objects. |
| vmagent.additionalRemoteWrites | list | | Remote write configuration of VMAgent, allowed parameters defined in a spec |
| vmagent.annotations | object | | VMAgent annotations |
| vmagent.enabled | bool | | Create VMAgent CR |
| vmagent.ingress | object | | VMAgent ingress configuration |
| vmagent.spec | object | | Full spec for VMAgent CRD. Allowed values described here |
| vmalert.additionalNotifierConfigs | object | | Configures static notifiers or discovers notifiers via Consul and DNS; see the specification here. This configuration is created as a separate secret and mounted to the VMAlert pod. |
| vmalert.annotations | object | | VMAlert annotations |
| vmalert.enabled | bool | | Create VMAlert CR |
| vmalert.ingress | object | | VMAlert ingress config |
| vmalert.ingress.extraPaths | list | | Extra paths to prepend to every host configuration. This is useful when working with annotation based services. |
| vmalert.remoteWriteVMAgent | bool | | Controls whether VMAlert should use VMAgent or VMInsert as a target for remote write |
| vmalert.spec | object | | Full spec for VMAlert CRD. Allowed values described here |
| vmalert.templateFiles | object | | Extra VMAlert annotation templates |
| vmauth.annotations | object | | VMAuth annotations |
| vmauth.enabled | bool | | Enable VMAuth CR |
| vmauth.spec | object | | Full spec for VMAuth CRD. Allowed values described here |
| vmcluster.annotations | object | | VMCluster annotations |
| vmcluster.enabled | bool | | Create VMCluster CR |
| vmcluster.ingress.insert.annotations | object | | Ingress annotations |
| vmcluster.ingress.insert.enabled | bool | | Enable deployment of ingress for server component |
| vmcluster.ingress.insert.extraPaths | list | | Extra paths to prepend to every host configuration. This is useful when working with annotation based services. |
| vmcluster.ingress.insert.hosts | list | | Array of host objects |
| vmcluster.ingress.insert.ingressClassName | string | | Ingress controller class name |
| vmcluster.ingress.insert.labels | object | | Ingress extra labels |
| vmcluster.ingress.insert.path | string | | Ingress default path |
| vmcluster.ingress.insert.pathType | string | | Ingress path type |
| vmcluster.ingress.insert.tls | list | | Array of TLS objects |
| vmcluster.ingress.select.annotations | object | | Ingress annotations |
| vmcluster.ingress.select.enabled | bool | | Enable deployment of ingress for server component |
| vmcluster.ingress.select.extraPaths | list | | Extra paths to prepend to every host configuration. This is useful when working with annotation based services. |
| vmcluster.ingress.select.hosts | list | | Array of host objects |
| vmcluster.ingress.select.ingressClassName | string | | Ingress controller class name |
| vmcluster.ingress.select.labels | object | | Ingress extra labels |
| vmcluster.ingress.select.path | string | | Ingress default path |
| vmcluster.ingress.select.pathType | string | | Ingress path type |
| vmcluster.ingress.select.tls | list | | Array of TLS objects |
| vmcluster.ingress.storage.annotations | object | | Ingress annotations |
| vmcluster.ingress.storage.enabled | bool | | Enable deployment of ingress for server component |
| vmcluster.ingress.storage.extraPaths | list | | Extra paths to prepend to every host configuration. This is useful when working with annotation based services. |
| vmcluster.ingress.storage.hosts | list | | Array of host objects |
| vmcluster.ingress.storage.ingressClassName | string | | Ingress controller class name |
| vmcluster.ingress.storage.labels | object | | Ingress extra labels |
| vmcluster.ingress.storage.path | string | | Ingress default path |
| vmcluster.ingress.storage.pathType | string | | Ingress path type |
| vmcluster.ingress.storage.tls | list | | Array of TLS objects |
| vmcluster.spec | object | | Full spec for VMCluster CRD. Allowed values described here |
| vmcluster.spec.retentionPeriod | string | | Data retention period. Possible unit characters: h(ours), d(ays), w(eeks), y(ears); if no unit character is specified, the unit is months. The minimum retention period is 24h. See these docs |
| vmsingle.annotations | object | | VMSingle annotations |
| vmsingle.enabled | bool | | Create VMSingle CR |
| vmsingle.ingress.annotations | object | | Ingress annotations |
| vmsingle.ingress.enabled | bool | | Enable deployment of ingress for server component |
| vmsingle.ingress.extraPaths | list | | Extra paths to prepend to every host configuration. This is useful when working with annotation based services. |
| vmsingle.ingress.hosts | list | | Array of host objects |
| vmsingle.ingress.ingressClassName | string | | Ingress controller class name |
| vmsingle.ingress.labels | object | | Ingress extra labels |
| vmsingle.ingress.path | string | | Ingress default path |
| vmsingle.ingress.pathType | string | | Ingress path type |
| vmsingle.ingress.tls | list | | Array of TLS objects |
| vmsingle.spec | object | | Full spec for VMSingle CRD. Allowed values described here |
| vmsingle.spec.retentionPeriod | string | | Data retention period. Possible unit characters: h(ours), d(ays), w(eeks), y(ears); if no unit character is specified, the unit is months. The minimum retention period is 24h. See these docs |
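A sketch of the retentionPeriod format described in the table; the values are illustrative:

```yaml
vmsingle:
  spec:
    # two weeks; a bare number such as "3" would mean three months
    retentionPeriod: "2w"
vmcluster:
  spec:
    # one year; only takes effect when vmcluster.enabled is true
    retentionPeriod: "1y"
```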