
| weight | menu | title | aliases |
|---|---|---|---|
| 3 | | vmagent | |

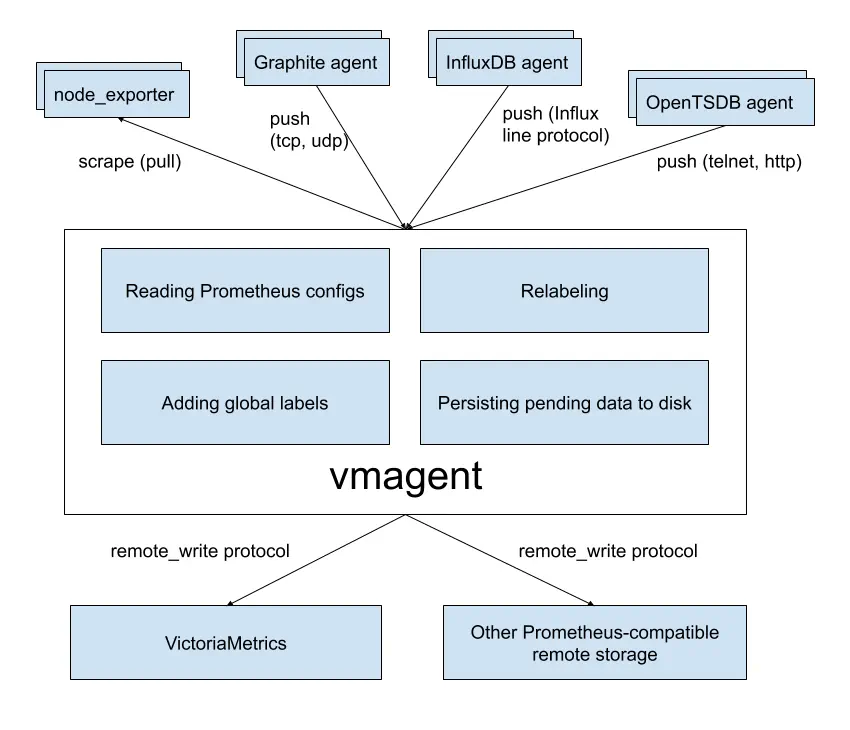

vmagent is a tiny agent that helps you collect metrics from various sources, relabel and filter the collected metrics, and store them in VictoriaMetrics or any other storage system via the Prometheus remote_write protocol or via the VictoriaMetrics remote_write protocol.

See Quick Start for details.

Motivation

While VictoriaMetrics provides an efficient solution to store and observe metrics, our users needed something fast and RAM-friendly to scrape metrics from Prometheus-compatible exporters into VictoriaMetrics.

We also found that our users' infrastructures are like snowflakes: no two are alike. Therefore, we decided to add more flexibility to vmagent, such as the ability to accept metrics via popular push protocols in addition to discovering Prometheus-compatible targets and scraping metrics from them.

Features

- Can be used as a drop-in replacement for Prometheus for discovering and scraping targets such as node_exporter. Note that single-node VictoriaMetrics can also discover and scrape Prometheus-compatible targets in the same way as vmagent does - see these docs.

- Can add, remove and modify labels (aka tags) via Prometheus relabeling. Can filter data before sending it to remote storage. See these docs for details.

- Can accept data via all the ingestion protocols supported by VictoriaMetrics - see these docs.

- Can aggregate incoming samples by time and by labels before sending them to remote storage - see these docs.

- Can replicate collected metrics simultaneously to multiple Prometheus-compatible remote storage systems - see these docs.

- Can reduce egress network bandwidth costs when the VictoriaMetrics remote write protocol is used for sending data to VictoriaMetrics.

- Works smoothly in environments with unstable connections to remote storage. If the remote storage is unavailable, the collected metrics are buffered at -remoteWrite.tmpDataPath. The buffered metrics are sent to remote storage as soon as the connection to the remote storage is restored. The maximum disk usage for the buffer can be limited with -remoteWrite.maxDiskUsagePerURL.

- Uses less RAM, CPU, disk IO and network bandwidth than Prometheus.

- Scrape targets can be spread among multiple vmagent instances when a big number of targets must be scraped. See these docs.

- Can load scrape configs from multiple files. See these docs.

- Can efficiently scrape targets that expose millions of time series such as /federate endpoint in Prometheus. See these docs.

- Can deal with high cardinality and high churn rate issues by limiting the number of unique time series at scrape time and before sending them to remote storage systems. See these docs.

- Can write collected metrics to multiple tenants. See these docs.

- Can read and write data from / to Kafka. See these docs.

- Can read and write data from / to Google PubSub. See these docs.

Quick Start

Please download the vmutils-* archive from the releases page (vmagent is also available in docker images), unpack it and pass the following flags to the vmagent binary in order to start scraping Prometheus-compatible targets and sending the data to the Prometheus-compatible remote storage:

- -promscrape.config with the path to the Prometheus config file (usually located at /etc/prometheus/prometheus.yml). The path can point either to a local file or to an http url. See scrape config examples. vmagent doesn't support some sections of the Prometheus config file, so you may need either to delete these sections or to run vmagent with the -promscrape.config.strictParse=false command-line flag. In this case vmagent ignores unsupported sections. See the list of unsupported sections.

- -remoteWrite.url with the Prometheus-compatible remote storage endpoint, such as VictoriaMetrics, where the data must be sent. The -remoteWrite.url may refer to a DNS SRV address. See these docs for details.

Example command for writing the data received via supported push-based protocols to single-node VictoriaMetrics located at victoria-metrics-host:8428:

```sh
/path/to/vmagent -remoteWrite.url=https://victoria-metrics-host:8428/api/v1/write
```

See these docs if you need to write the data to VictoriaMetrics cluster.

Example command for scraping Prometheus targets and writing the data to single-node VictoriaMetrics:

```sh
/path/to/vmagent -promscrape.config=/path/to/prometheus.yml -remoteWrite.url=https://victoria-metrics-host:8428/api/v1/write
```

See how to scrape Prometheus-compatible targets for more details.

If you use single-node VictoriaMetrics, then you can discover and scrape Prometheus-compatible targets directly from VictoriaMetrics without the need to use vmagent - see these docs.

vmagent can reduce network bandwidth costs under high load when the VictoriaMetrics remote write protocol is used.

See troubleshooting docs if you encounter common issues with vmagent.

See various use cases for vmagent.

Pass -help to vmagent in order to see the full list of supported command-line flags with their descriptions.

How to push data to vmagent

vmagent supports the same set of push-based data ingestion protocols as VictoriaMetrics does, in addition to scraping pull-based Prometheus-compatible targets:

- DataDog "submit metrics" API. See these docs.

- InfluxDB line protocol via http://<vmagent>:8429/write. See these docs.

- Graphite plaintext protocol if the -graphiteListenAddr command-line flag is set. See these docs.

- OpenTelemetry http API. See these docs.

- NewRelic API. See these docs.

- OpenTSDB telnet and http protocols if the -opentsdbListenAddr command-line flag is set. See these docs.

- Prometheus remote write protocol via http://<vmagent>:8429/api/v1/write.

- JSON lines import protocol via http://<vmagent>:8429/api/v1/import. See these docs.

- Native data import protocol via http://<vmagent>:8429/api/v1/import/native. See these docs.

- Prometheus exposition format via http://<vmagent>:8429/api/v1/import/prometheus. See these docs for details.

- Arbitrary CSV data via http://<vmagent>:8429/api/v1/import/csv. See these docs.
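As a rough illustration of the push path, the sketch below formats samples in the Prometheus exposition format and POSTs them to the /api/v1/import/prometheus endpoint from the list above. The address (for example http://localhost:8429) and the helper names are assumptions made for this example; label value escaping follows the Prometheus text format (backslash, double quote, newline).

```python
import urllib.request


def escape_label_value(value: str) -> str:
    # The Prometheus text format escapes backslash, double quote and newline in label values.
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def format_sample(name: str, labels: dict[str, str], value: float) -> str:
    """Render one sample as a line of the Prometheus exposition format."""
    if labels:
        pairs = ",".join(
            f'{key}="{escape_label_value(val)}"' for key, val in sorted(labels.items())
        )
        return f"{name}{{{pairs}}} {value}"
    return f"{name} {value}"


def push_samples(base_url: str, lines: list[str], timeout: float = 10.0) -> int:
    """POST exposition-format lines to vmagent and return the HTTP status code.

    base_url is an assumption, e.g. "http://localhost:8429".
    """
    body = ("\n".join(lines) + "\n").encode("utf-8")
    request = urllib.request.Request(
        f"{base_url}/api/v1/import/prometheus",
        data=body,
        method="POST",
        headers={"Content-Type": "text/plain"},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.status


if __name__ == "__main__":
    sample = format_sample("room_temperature_celsius", {"room": "kitchen"}, 21.5)
    print(sample)  # room_temperature_celsius{room="kitchen"} 21.5
    # Needs a running vmagent at the assumed address:
    # push_samples("http://localhost:8429", [sample])
```

Only the formatting part runs without a live vmagent; the network call is left commented out so the snippet is safe to execute as-is.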

Configuration update

vmagent should be restarted in order to update config options set via command-line args.

vmagent supports multiple approaches for reloading configs from updated config files such as -promscrape.config, -remoteWrite.relabelConfig, -remoteWrite.urlRelabelConfig, -streamAggr.config and -remoteWrite.streamAggr.config:

- Sending the SIGHUP signal to the vmagent process:

  ```sh
  kill -SIGHUP `pidof vmagent`
  ```

- Sending an HTTP request to the http://vmagent:8429/-/reload endpoint. This endpoint can be protected with the -reloadAuthKey command-line flag.

There is also the -promscrape.configCheckInterval command-line flag, which can be used for automatically reloading configs from the updated -promscrape.config file.
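Both reload triggers can be scripted. The sketch below is a minimal example that assumes vmagent listens on http://vmagent:8429, that a plain GET to /-/reload is accepted, and that, when -reloadAuthKey is set, the key is passed in the authKey query arg. The function names are hypothetical helpers, not part of vmagent.

```python
import os
import signal
import urllib.parse
import urllib.request
from typing import Optional


def reload_url(base_url: str, auth_key: Optional[str] = None) -> str:
    """Build the /-/reload URL; auth_key is only needed if -reloadAuthKey is set."""
    url = f"{base_url.rstrip('/')}/-/reload"
    if auth_key:
        # Assumption: the key is passed via the authKey query arg.
        url += "?" + urllib.parse.urlencode({"authKey": auth_key})
    return url


def reload_via_http(base_url: str, auth_key: Optional[str] = None) -> int:
    """Ask a running vmagent to re-read its config files; returns the HTTP status."""
    with urllib.request.urlopen(reload_url(base_url, auth_key), timeout=10) as resp:
        return resp.status


def reload_via_sighup(pid: int) -> None:
    """Equivalent of `kill -SIGHUP <pid>` (POSIX only)."""
    os.kill(pid, signal.SIGHUP)
```

The HTTP variant is convenient when vmagent runs in a container or on another host, where sending a signal to its process is not practical.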

Use cases

IoT and Edge monitoring

vmagent can run and collect metrics in IoT environments and industrial networks with unreliable or scheduled connections to their remote storage.

It buffers the collected data in local files until the connection to remote storage becomes available and then sends the buffered data to the remote storage. It retries sending the data to remote storage until errors are resolved.

The maximum on-disk size for the buffered metrics can be limited with -remoteWrite.maxDiskUsagePerURL.

vmagent works on various architectures from the IoT world - 32-bit arm, 64-bit arm, ppc64, 386, amd64.

vmagent can reduce network bandwidth costs by using the VictoriaMetrics remote write protocol.

Drop-in replacement for Prometheus

If you use Prometheus only for scraping metrics from various targets and forwarding these metrics to remote storage, then vmagent can replace Prometheus. Typically, vmagent requires less RAM, CPU and network bandwidth than Prometheus.

See these docs for details.

Statsd alternative

vmagent can be used as an alternative to statsd when stream aggregation is enabled.

See these docs for details.

Flexible metrics relay

vmagent can accept metrics in various popular data ingestion protocols, apply relabeling to the accepted metrics (for example, change metric names/labels or drop unneeded metrics) and then forward the relabeled metrics to other remote storage systems that support the Prometheus remote_write protocol (including other vmagent instances).

Replication and high availability

vmagent replicates the collected metrics among multiple remote storage instances configured via -remoteWrite.url args. If a single remote storage instance is temporarily out of service, then the collected data remains available in another remote storage instance. vmagent buffers the collected data in files at -remoteWrite.tmpDataPath until the remote storage becomes available again, and then it sends the buffered data to the remote storage in order to prevent data gaps.

VictoriaMetrics cluster already supports replication, so there is no need to specify multiple -remoteWrite.url flags when writing data to the same cluster. See these docs.

Sharding among remote storages

By default, vmagent replicates data among the remote storage systems enumerated via the -remoteWrite.url command-line flag. If the -remoteWrite.shardByURL command-line flag is set, then vmagent spreads the outgoing time series evenly among all the remote storage systems enumerated via -remoteWrite.url.

It is possible to replicate samples among remote storage systems by passing the -remoteWrite.shardByURLReplicas=N command-line flag to vmagent in addition to the -remoteWrite.shardByURL command-line flag. This instructs vmagent to write every outgoing sample to N distinct remote storage systems enumerated via -remoteWrite.url in addition to sharding.

Samples for the same time series are routed to the same remote storage system if the -remoteWrite.shardByURL flag is specified. This allows building scalable data processing pipelines when a single remote storage cannot keep up with the data ingestion workload. For example, this allows building horizontally scalable stream aggregation by routing outgoing samples for the same time series of counter and histogram types from top-level vmagent instances to the same second-level vmagent instance, so they are aggregated properly.

If the -remoteWrite.shardByURL command-line flag is set, then all the metric labels are used for even sharding among the remote storage systems specified in -remoteWrite.url.

Sometimes it may be needed to use only a particular set of labels for sharding. For example, it may be needed to route all the metrics with the same instance label to the same -remoteWrite.url. In this case you can specify a comma-separated list of these labels in the -remoteWrite.shardByURL.labels command-line flag. For example, -remoteWrite.shardByURL.labels=instance,__name__ would shard metrics with the same name and instance label to the same -remoteWrite.url.

Sometimes it may be needed to ignore some labels when sharding samples across multiple -remoteWrite.url backends. For example, all the raw samples with the same set of labels except the instance and pod labels may need to be routed to the same backend. In this case the list of ignored labels must be passed to the -remoteWrite.shardByURL.ignoreLabels command-line flag: -remoteWrite.shardByURL.ignoreLabels=instance,pod.
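The routing rules above can be modelled in a few lines. This is a conceptual Python sketch, not vmagent's implementation. It assumes a stable hash (SHA-256 here) over the selected labels and picks N consecutive URLs for -remoteWrite.shardByURLReplicas=N. vmagent's actual hash function and replica placement may differ, and the `only`/`ignore` parameters are hypothetical stand-ins for -remoteWrite.shardByURL.labels and -remoteWrite.shardByURL.ignoreLabels.

```python
import hashlib
from typing import Optional


def shard_key(labels: dict[str, str], only: Optional[set[str]] = None,
              ignore: Optional[set[str]] = None) -> bytes:
    """Serialize the labels that take part in sharding into a stable byte string."""
    selected = {
        name: value
        for name, value in labels.items()
        if (only is None or name in only) and (ignore is None or name not in ignore)
    }
    # Sorting makes the key independent of label order.
    return "\x00".join(f"{k}={v}" for k, v in sorted(selected.items())).encode()


def pick_urls(labels: dict[str, str], urls: list[str], replicas: int = 1,
              only: Optional[set[str]] = None,
              ignore: Optional[set[str]] = None) -> list[str]:
    """Return the remote storage URLs that receive samples for this series."""
    if not 1 <= replicas <= len(urls):
        raise ValueError("replicas must be between 1 and len(urls)")
    digest = hashlib.sha256(shard_key(labels, only, ignore)).digest()
    start = int.from_bytes(digest[:8], "big") % len(urls)
    # Consecutive slots give `replicas` distinct URLs for the same series.
    return [urls[(start + i) % len(urls)] for i in range(replicas)]
```

Because the key depends only on the selected labels, two series that differ only in an ignored label such as instance always land on the same backend, which is what second-level stream aggregation relies on.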

See also how to scrape big number of targets.

Relabeling and filtering

vmagent can add, remove or update labels on the collected data before sending it to the remote storage. Additionally, it can remove unwanted samples via Prometheus-like relabeling before sending the collected data to remote storage.

Please see these docs for details.

Splitting data streams among multiple systems

vmagent supports splitting the collected data between multiple destinations with the help of -remoteWrite.urlRelabelConfig, which is applied independently for each configured -remoteWrite.url destination. For example, it is possible to replicate or split data among long-term remote storage, short-term remote storage and a real-time analytical system built on top of Kafka.

Note that each destination can receive its own subset of the collected data due to per-destination relabeling via -remoteWrite.urlRelabelConfig.

For example, let's assume all the metrics scraped or received by vmagent have the label env with values dev or prod. To route metrics with env=dev to destination dev and metrics with env=prod to destination prod, apply the following config:

- Create the relabeling config file relabelDev.yml to drop all metrics that don't have the label env=dev:

  ```yaml
  - action: keep
    source_labels: [env]
    regex: "dev"
  ```

- Create the relabeling config file relabelProd.yml to drop all metrics that don't have the label env=prod:

  ```yaml
  - action: keep
    source_labels: [env]
    regex: "prod"
  ```

- Configure vmagent with 2 -remoteWrite.url flags pointing to destinations dev and prod with the corresponding -remoteWrite.urlRelabelConfig configs:

  ```sh
  ./vmagent \
    -remoteWrite.url=http://<dev-url> -remoteWrite.urlRelabelConfig=relabelDev.yml \
    -remoteWrite.url=http://<prod-url> -remoteWrite.urlRelabelConfig=relabelProd.yml
  ```

With this configuration vmagent forwards only metrics that have the env=dev label to http://<dev-url>, and only metrics that have the env=prod label to http://<prod-url>.

Please note that the order of flags is important: the 1st mentioned -remoteWrite.urlRelabelConfig is applied to the 1st mentioned -remoteWrite.url, and so on.
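To make the per-destination filtering concrete, the sketch below emulates the Prometheus keep action used in relabelDev.yml and relabelProd.yml: the values of source_labels are joined with `;` and the regex must match the whole joined string. This is a simplified Python model for illustration only; the dict-based rule format and the helper names are hypothetical, and Python's `re` is used in place of the RE2 syntax that real relabeling uses.

```python
import re


def keep(labels: dict[str, str], source_labels: list[str], regex: str) -> bool:
    """Prometheus-style `action: keep`: keep the series if the anchored regex matches."""
    # Missing labels contribute an empty string, as in Prometheus relabeling.
    joined = ";".join(labels.get(name, "") for name in source_labels)
    return re.fullmatch(regex, joined) is not None


# Hypothetical in-memory equivalent of the two -remoteWrite.urlRelabelConfig files.
DESTINATIONS = {
    "http://<dev-url>": {"source_labels": ["env"], "regex": "dev"},
    "http://<prod-url>": {"source_labels": ["env"], "regex": "prod"},
}


def route(labels: dict[str, str]) -> list[str]:
    """Return the destinations whose keep rule accepts this series."""
    return [url for url, rule in DESTINATIONS.items()
            if keep(labels, rule["source_labels"], rule["regex"])]


if __name__ == "__main__":
    print(route({"__name__": "up", "env": "dev"}))  # ['http://<dev-url>']
```

The full-string match is why env="development" is not forwarded to the dev destination: regex "dev" must match the entire value, not a prefix.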

Prometheus remote_write proxy

vmagent can be used as a proxy for Prometheus data sent via the Prometheus remote_write protocol. It can accept data via the remote_write API at the /api/v1/write endpoint, apply relabeling and filtering, and then proxy it to another remote_write system.

vmagent can be configured to accept incoming remote_write requests over TLS with -tls* command-line flags. Also, Basic Auth can be enabled for the incoming remote_write requests with -httpAuth.* command-line flags.

remote_write for clustered version

While vmagent can accept data in several supported protocols (OpenTSDB, Influx, Prometheus, Graphite) and scrape data from various targets, writes are always performed via the Prometheus remote_write protocol. Therefore, for the clustered version, the -remoteWrite.url command-line flag should be configured as <schema>://<vminsert-host>:8480/insert/<accountID>/prometheus/api/v1/write according to these docs.

There is also support for multitenant writes. See these docs.
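For example, the write URL for a given tenant can be assembled as below. This is a small Python sketch; the host name, the function name and the accountID:projectID tenant form (a missing projectID meaning 0, both IDs being 32-bit unsigned integers) are assumptions based on the VictoriaMetrics cluster URL format rather than something stated in this section.

```python
def vminsert_write_url(host: str, account_id: int, project_id: int = 0,
                       scheme: str = "http", port: int = 8480) -> str:
    """Build a -remoteWrite.url value for a vminsert tenant.

    Tenants are written as accountID or accountID:projectID; a missing
    projectID is treated as 0.
    """
    for value in (account_id, project_id):
        if not 0 <= value < 2**32:
            raise ValueError(f"tenant ID out of uint32 range: {value}")
    tenant = str(account_id) if project_id == 0 else f"{account_id}:{project_id}"
    return f"{scheme}://{host}:{port}/insert/{tenant}/prometheus/api/v1/write"


if __name__ == "__main__":
    print(vminsert_write_url("vminsert-host", 42))
    # http://vminsert-host:8480/insert/42/prometheus/api/v1/write
```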

Flexible deduplication

Deduplication at stream aggregation allows setting up arbitrarily complex deduplication schemes for the collected samples. Examples:

- The following command instructs vmagent to send only the last sample per each seen time series every 60 seconds:

  ```sh
  ./vmagent -remoteWrite.url=http://remote-storage/api/v1/write -remoteWrite.streamAggr.dedupInterval=60s
  ```

- The following command instructs vmagent to merge time series with different replica label values and then to send only the last sample per each merged series every 60 seconds:

  ```sh
  ./vmagent -remoteWrite.url=http://remote-storage/api/v1/write -streamAggr.dropInputLabels=replica -remoteWrite.streamAggr.dedupInterval=60s
  ```
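The effect of these settings can be modelled in Python. The sketch assumes samples arrive as (labels, timestamp, value) tuples and keeps, per series and per interval, the sample with the largest timestamp; `drop_labels` mimics -streamAggr.dropInputLabels. It is a conceptual batch model only: the tuple format and the function name are hypothetical, the tie-breaking rule is a choice made for this sketch, and vmagent itself works on a stream and flushes each interval.

```python
from typing import Iterable

Labels = tuple[tuple[str, str], ...]


def dedup(samples: Iterable[tuple[dict[str, str], float, float]], interval: float,
          drop_labels: frozenset[str] = frozenset()):
    """Keep the latest sample per (series, interval) bucket.

    Returns {(series_key, bucket_start): (timestamp, value)}.
    """
    latest: dict[tuple[Labels, float], tuple[float, float]] = {}
    for labels, ts, value in samples:
        # Dropping labels such as `replica` merges the replicas into one series.
        key: Labels = tuple(sorted((k, v) for k, v in labels.items() if k not in drop_labels))
        bucket = (ts // interval) * interval
        current = latest.get((key, bucket))
        # On equal timestamps the later-arriving sample wins in this sketch.
        if current is None or ts >= current[0]:
            latest[(key, bucket)] = (ts, value)
    return latest
```

With `drop_labels=frozenset({"replica"})`, samples from replicas a and b that fall into the same 60-second bucket collapse into a single output sample, which is what the second command above achieves.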

SRV urls

If vmagent encounters urls with the srv+ prefix in the hostname (such as http://srv+some-addr/some/path), then it resolves the some-addr DNS SRV record into a TCP address with hostname and TCP port, and then uses the resulting url when it needs to connect to it.

SRV urls are supported in the following places:

- In the -remoteWrite.url command-line flag. For example, if the victoria-metrics DNS SRV record contains the victoria-metrics-host:8428 TCP address, then -remoteWrite.url=http://srv+victoria-metrics/api/v1/write is automatically resolved into -remoteWrite.url=http://victoria-metrics-host:8428/api/v1/write. If the DNS SRV record is resolved into multiple TCP addresses, then vmagent uses a randomly chosen address for each connection it establishes to the remote storage.

- In scrape target addresses aka the __address__ label - see these docs for details.

- In urls used for service discovery.

SRV urls are useful when HTTP services run on different TCP ports or when they can change TCP ports over time (for instance, after a restart).
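The url rewrite step can be sketched in Python as below. The standard library cannot query SRV records, so the sketch takes a resolver callable that returns (host, port) pairs; in practice it could be backed by a DNS library such as dnspython. The resolver, the function name and the example records are assumptions for illustration.

```python
import random
from typing import Callable, Optional, Sequence
from urllib.parse import urlsplit, urlunsplit

SRV_PREFIX = "srv+"


def resolve_srv_url(url: str,
                    lookup_srv: Callable[[str], Sequence[tuple[str, int]]],
                    rng: Optional[random.Random] = None) -> str:
    """Replace http://srv+name/... with http://host:port/... using one SRV record."""
    parts = urlsplit(url)
    hostname = parts.hostname or ""
    if not hostname.startswith(SRV_PREFIX):
        return url  # plain url, nothing to resolve
    records = lookup_srv(hostname[len(SRV_PREFIX):])
    if not records:
        raise LookupError(f"no SRV records for {hostname}")
    # Pick a random address, mirroring the per-connection random choice described above.
    host, port = (rng or random).choice(records)
    # Note: any user:password part of the original netloc is not preserved here.
    return urlunsplit(parts._replace(netloc=f"{host}:{port}"))
```

Calling the function for every new connection, rather than once at startup, is what lets a service move to another port without reconfiguring vmagent.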

VictoriaMetrics remote write protocol

vmagent supports sending data to the configured -remoteWrite.url either via the Prometheus remote write protocol or via the VictoriaMetrics remote write protocol.

The VictoriaMetrics remote write protocol provides the following benefits compared to the Prometheus remote write protocol:

- Network bandwidth usage is reduced by 2x-5x. This allows saving network bandwidth costs when vmagent and the configured remote storage systems are located in different datacenters, availability zones or regions.

- Disk read/write IO and disk space usage at vmagent are reduced when the remote storage is temporarily unavailable. In this case vmagent buffers the incoming data to disk using the VictoriaMetrics remote write format. This reduces disk read/write IO and disk space usage by 2x-5x compared to the Prometheus remote write format.

vmagent automatically switches to the VictoriaMetrics remote write protocol when it sends data to VictoriaMetrics components such as other vmagent instances, single-node VictoriaMetrics or vminsert in the cluster version. It is possible to force the switch to the VictoriaMetrics remote write protocol by specifying the -remoteWrite.forceVMProto command-line flag for the corresponding -remoteWrite.url.

It is possible to tune the compression level for the VictoriaMetrics remote write protocol with the -remoteWrite.vmProtoCompressLevel command-line flag. Bigger values reduce network usage at the cost of higher CPU usage. Negative values reduce CPU usage at the cost of higher network usage. The default value for the compression level is 0, the minimum value is -22 and the maximum value is 22. The default value works optimally in most cases, so changing it isn't recommended.

vmagent automatically switches to the Prometheus remote write protocol when it sends data to old versions of VictoriaMetrics components or to other Prometheus-compatible remote storage systems. It is possible to force the switch to the Prometheus remote write protocol by specifying the -remoteWrite.forcePromProto command-line flag for the corresponding -remoteWrite.url.

Multitenancy

By default, vmagent collects the data without tenant identifiers and routes it to the remote storage specified via the -remoteWrite.url command-line flag. The -remoteWrite.url can point to the /insert/<tenant_id>/prometheus/api/v1/write path at vminsert according to these docs. In this case all the metrics are written to the given <tenant_id> tenant.

The easiest way to write data to multiple distinct tenants is to specify the needed tenants via vm_account_id and vm_project_id labels and then to push metrics with these labels to the multitenant url at VictoriaMetrics cluster. The vm_account_id and vm_project_id labels can be specified via relabeling before sending the metrics to -remoteWrite.url.

For example, the following relabeling rule instructs vmagent to send metrics to the <account_id>:0 tenant defined in the prometheus.io/account_id annotation of the Kubernetes pod deployment:

```yaml
scrape_configs:
  - job_name: kubernetes-pods  # illustrative job name
    kubernetes_sd_configs:
      - role: pod
    relabel_configs:
      - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_account_id]
        target_label: vm_account_id
```

vmagent can accept data via the same multitenant endpoints (/insert/<accountID>/<suffix>) as vminsert at VictoriaMetrics cluster does according to these docs if the -enableMultitenantHandlers command-line flag is set. In this case, vmagent automatically converts tenant identifiers from the URL to vm_account_id and vm_project_id labels.

These tenant labels are added before applying relabeling specified via the -remoteWrite.relabelConfig and -remoteWrite.urlRelabelConfig command-line flags. Metrics with vm_account_id and vm_project_id labels can be routed to the corresponding tenants when -remoteWrite.url points to the multitenant url at VictoriaMetrics cluster.
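The URL-to-label conversion described above can be approximated in Python as follows. It assumes the accountID:projectID tenant form used by VictoriaMetrics cluster, with a missing projectID treated as 0. The function name is hypothetical, and the code only models the mapping, not vmagent's request handling.

```python
import re

# /insert/<accountID>[:<projectID>]/<suffix>
_TENANT_PATH = re.compile(
    r"^/insert/(?P<account>[0-9]+)(?::(?P<project>[0-9]+))?/(?P<suffix>.+)$"
)


def tenant_labels(path: str) -> tuple[dict[str, str], str]:
    """Split a multitenant path into tenant labels and the remaining handler path."""
    match = _TENANT_PATH.match(path)
    if match is None:
        raise ValueError(f"not a multitenant path: {path}")
    account = int(match["account"])
    project = int(match["project"] or 0)
    for value in (account, project):
        # Tenant IDs are 32-bit unsigned integers.
        if value >= 2**32:
            raise ValueError(f"tenant ID out of uint32 range: {value}")
    labels = {"vm_account_id": str(account), "vm_project_id": str(project)}
    return labels, "/" + match["suffix"]
```

The returned labels behave like any other labels, so relabeling rules in -remoteWrite.relabelConfig can inspect or rewrite them before the data leaves vmagent.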

How to collect metrics in Prometheus format

Specify the path to the prometheus.yml file via the -promscrape.config command-line flag. vmagent takes into account the following sections from the Prometheus config file:

- global
- scrape_configs

All other sections are ignored, including the remote_write section. Use -remoteWrite.* command-line flags instead for configuring remote write settings. See the list of unsupported config sections.

The file pointed to by -promscrape.config may contain %{ENV_VAR} placeholders, which are substituted by the corresponding ENV_VAR environment variable values.
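The placeholder substitution can be pictured with the short Python sketch below. It is an illustration under assumptions: the accepted placeholder name pattern (letters, digits, underscores) and failing on unset variables are choices made for this sketch, not documented vmagent behavior.

```python
import os
import re
from typing import Mapping, Optional

_PLACEHOLDER = re.compile(r"%\{([A-Za-z_][A-Za-z0-9_]*)\}")


def expand_placeholders(text: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Replace every %{NAME} in text with the value of environment variable NAME."""
    env = os.environ if env is None else env

    def substitute(match: re.Match) -> str:
        name = match.group(1)
        if name not in env:
            raise KeyError(f"environment variable {name} is not set")
        return env[name]

    return _PLACEHOLDER.sub(substitute, text)
```

For example, a scrape config line `targets: ['%{TARGET_HOST}:9100']` becomes `targets: ['node-1:9100']` when TARGET_HOST=node-1.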

See also:

scrape_config enhancements

vmagent supports the following additional options in the scrape_configs section:

- headers - a list of HTTP headers to send to the scrape target with each scrape request. This can be used when the scrape target needs custom authorization and authentication. For example:

  ```yaml
  scrape_configs:
    - job_name: custom_headers
      headers:
        - "TenantID: abc"
        - "My-Auth: TopSecret"
  ```

- disable_compression: true for disabling response compression on a per-job basis. By default, vmagent requests compressed responses from scrape targets to save network bandwidth.

- disable_keepalive: true for disabling HTTP keep-alive connections on a per-job basis. By default, vmagent uses keep-alive connections to scrape targets to reduce the overhead of re-establishing connections.

- series_limit: N for limiting the number of unique time series a single scrape target can expose. See these docs.

- stream_parse: true for scraping targets in a streaming manner. This may be useful when targets export a big number of metrics. See these docs.

- scrape_align_interval: duration for aligning scrapes to the given interval instead of using a random offset in the range [0 ... scrape_interval] for scraping each target. The random offset helps to spread scrapes evenly in time.

- scrape_offset: duration for specifying the exact offset for scraping instead of using a random offset in the range [0 ... scrape_interval].

See scrape_configs docs for more details on all the supported options.
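A simplified model of the difference between the default random offset and scrape_align_interval is sketched in Python below. It only computes the time of the first scrape for one target and does not model scrape_offset. The function name is hypothetical, and how vmagent derives the offset internally is not described here, so the random draw is an assumption.

```python
import math
import random
from typing import Optional


def first_scrape_time(now: float, scrape_interval: float,
                      align_interval: Optional[float] = None,
                      rng: Optional[random.Random] = None) -> float:
    """Return the timestamp (seconds) of the first scrape for a target."""
    if align_interval is not None:
        # Aligned: wait until the next multiple of align_interval.
        return math.ceil(now / align_interval) * align_interval
    # Default: start at a random offset in the range [0 ... scrape_interval].
    offset = (rng or random.Random()).uniform(0, scrape_interval)
    return now + offset
```

With random offsets, many targets sharing the same scrape_interval are scraped at different moments, while aligned scrapes all fire on the same interval boundaries, which is useful when samples from different targets must share timestamps.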

Loading scrape configs from multiple files

vmagent supports loading scrape configs from multiple files specified in the scrape_config_files section of the -promscrape.config file. For example, the following -promscrape.config instructs vmagent to load scrape configs from all the *.yml files under the configs directory, from the single_scrape_config.yml local file and from the https://config-server/scrape_config.yml url:

```yaml
scrape_config_files:
  - configs/*.yml
  - single_scrape_config.yml
  - https://config-server/scrape_config.yml
```

Every referenced file can contain an arbitrary number of supported scrape configs. There is no need to specify the top-level scrape_configs section in these files. For example:

```yaml
- job_name: foo
  static_configs:
    - targets: ["vmagent:8429"]

- job_name: bar
  kubernetes_sd_configs:
    - role: pod
```

vmagent is able to dynamically reload these files - see these docs.

Unsupported Prometheus config sections

vmagent doesn't support the following sections in the Prometheus config file passed to the -promscrape.config command-line flag:

- remote_write. This section is substituted with various -remoteWrite* command-line flags. See the full list of flags. The remote_write section isn't supported in order to reduce possible confusion when vmagent is used for accepting incoming metrics via supported push protocols. In this case the -promscrape.config file isn't needed.

- remote_read. This section isn't supported at all, since vmagent doesn't provide the Prometheus querying API. It is expected that the querying API is provided by the remote storage specified via -remoteWrite.url, such as VictoriaMetrics. See Prometheus querying API docs for VictoriaMetrics.

- rule_files and alerting. These sections are supported by vmalert.

The list of supported service discovery types is available here.

Additionally, vmagent doesn't support the refresh_interval option in service discovery sections. This option is substituted with -promscrape.*CheckInterval command-line flags, which are specific to each service discovery type.

See the full list of command-line flags for vmagent.

Adding labels to metrics

Extra labels can be added to metrics collected by vmagent via the following mechanisms:

- The global -> external_labels section in the -promscrape.config file. These labels are added only to metrics scraped from targets configured in the -promscrape.config file. They aren't added to metrics collected via other data ingestion protocols.

- The -remoteWrite.label command-line flag. These labels are added to all the collected metrics before sending them to -remoteWrite.url. For example, the following command starts vmagent, which adds the {datacenter="foobar"} label to all the metrics pushed to all the configured remote storage systems (all the -remoteWrite.url flag values):

  ```sh
  /path/to/vmagent -remoteWrite.label=datacenter=foobar ...
  ```

- Via relabeling. See these docs.

Automatically generated metrics

vmagent automatically generates the following metrics for each scrape of every Prometheus-compatible target and attaches instance, job and other target-specific labels to these metrics:

- up - this metric exposes 1 on a successful scrape and 0 on an unsuccessful scrape. This allows monitoring failing scrapes with the following MetricsQL query:

  ```metricsql
  up == 0
  ```

- scrape_duration_seconds - the duration of the scrape for the given target. This allows monitoring slow scrapes. For example, the following MetricsQL query returns scrapes that take more than 1.5 seconds to complete:

  ```metricsql
  scrape_duration_seconds > 1.5
  ```

- scrape_timeout_seconds - the configured timeout for the current scrape target (aka scrape_timeout). This allows detecting targets with scrape durations close to the configured scrape timeout. For example, the following MetricsQL query returns targets (identified by the instance label) that take more than 80% of the configured scrape_timeout during scrapes:

  ```metricsql
  scrape_duration_seconds / scrape_timeout_seconds > 0.8
  ```

- scrape_response_size_bytes - the response size in bytes for the given target. This allows monitoring the amount of scraped data and adjusting the max_scrape_size option for scraped targets. For example, the following MetricsQL query returns targets with a scrape response bigger than 10MiB:

  ```metricsql
  scrape_response_size_bytes > 10MiB
  ```

- scrape_samples_scraped - the number of samples (aka metrics) parsed per each scrape. This allows detecting targets that expose too many metrics. For example, the following MetricsQL query returns targets that expose more than 10000 metrics:

  ```metricsql
  scrape_samples_scraped > 10000
  ```

- scrape_samples_limit - the configured limit on the number of metrics the given target can expose. The limit can be set via the sample_limit option at scrape_configs. This metric is exposed only if sample_limit is set. This allows detecting targets that expose too many metrics compared to the configured sample_limit. For example, the following query returns targets (identified by the instance label) that expose more than 80% of the metrics allowed by the configured sample_limit:

  ```metricsql
  scrape_samples_scraped / scrape_samples_limit > 0.8
  ```

- scrape_samples_post_metric_relabeling - the number of samples (aka metrics) left after applying metric-level relabeling from the metric_relabel_configs section (see relabeling docs for more details). This allows detecting targets with too many metrics after the relabeling. For example, the following MetricsQL query returns targets with more than 10000 metrics after the relabeling:

  ```metricsql
  scrape_samples_post_metric_relabeling > 10000
  ```

- scrape_series_added - an approximate number of new series the given target generates during the current scrape. This metric allows detecting targets (identified by the instance label) that lead to a high churn rate. For example, the following MetricsQL query returns targets that generate more than 1000 new series during the last hour:

  ```metricsql
  sum_over_time(scrape_series_added[1h]) > 1000
  ```

  vmagent sets scrape_series_added to zero when it runs with the -promscrape.noStaleMarkers command-line flag or when it scrapes a target with the no_stale_markers: true option, i.e. when staleness markers are disabled.

- scrape_series_limit - the limit on the number of unique time series the given target can expose according to these docs. This metric is exposed only if the series limit is set.

- scrape_series_current - the number of unique series the given target has exposed so far. This metric is exposed only if the series limit is set according to these docs. This metric allows alerting when the number of series exposed by the given target reaches the limit. For example, the following query alerts when the target exposes more than 90% of the configured limit of unique series:

  ```metricsql
  scrape_series_current / scrape_series_limit > 0.9
  ```

- scrape_series_limit_samples_dropped - the number of samples dropped during the scrape because the limit on the number of unique series was exceeded. This metric is exposed only if the series limit is set according to these docs. This metric allows alerting when scraped samples are dropped because of the exceeded limit. For example, the following query alerts when at least a single sample is dropped because of the exceeded limit during the last hour:

  ```metricsql
  sum_over_time(scrape_series_limit_samples_dropped[1h]) > 0
  ```

If the target exports metrics with names clashing with the automatically generated metric names, then vmagent automatically adds the exported_ prefix to these metric names, so they don't clash with the automatically generated metric names.
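The renaming rule from the last paragraph can be illustrated with this Python sketch. The reserved names are taken from the list above; the function name is hypothetical, and treating every listed name as reserved, even the conditionally exposed ones such as scrape_samples_limit, is an assumption of the sketch.

```python
# Names of the automatically generated per-scrape metrics listed above.
AUTO_METRICS = frozenset({
    "up",
    "scrape_duration_seconds",
    "scrape_timeout_seconds",
    "scrape_response_size_bytes",
    "scrape_samples_scraped",
    "scrape_samples_limit",
    "scrape_samples_post_metric_relabeling",
    "scrape_series_added",
    "scrape_series_limit",
    "scrape_series_current",
    "scrape_series_limit_samples_dropped",
})


def avoid_clash(metric_name: str) -> str:
    """Prefix scraped metric names that collide with auto-generated ones."""
    return f"exported_{metric_name}" if metric_name in AUTO_METRICS else metric_name
```

For example, a target that itself exposes an `up` metric (common for exporters that proxy other services) ends up with `exported_up`, while vmagent's own `up` keeps reporting the scrape status.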

Relabeling

VictoriaMetrics components support Prometheus-compatible relabeling with additional enhancements. The relabeling can be defined in the following places processed by vmagent:

- In the scrape_config -> relabel_configs section of the -promscrape.config file. This relabeling is used for modifying labels in discovered targets and for dropping unneeded targets. See the relabeling cookbook for details. This relabeling can be debugged by clicking the debug link at the corresponding target on the http://vmagent:8429/targets page or on the http://vmagent:8429/service-discovery page. See these docs for details. The link is unavailable if vmagent runs with the -promscrape.dropOriginalLabels command-line flag.

- In the scrape_config -> metric_relabel_configs section of the -promscrape.config file. This relabeling is used for modifying labels in scraped metrics and for dropping unneeded metrics. See the relabeling cookbook for details. This relabeling can be debugged via the http://vmagent:8429/metric-relabel-debug page. See these docs for details.

- In the -remoteWrite.relabelConfig file. This relabeling is used for modifying labels for all the collected metrics (including metrics obtained via push-based protocols) and for dropping unneeded metrics before sending them to all the configured -remoteWrite.url addresses. This relabeling can be debugged via the http://vmagent:8429/metric-relabel-debug page. See these docs for details.

- In the -remoteWrite.urlRelabelConfig files. This relabeling is used for modifying labels for metrics and for dropping unneeded metrics before sending them to a particular -remoteWrite.url. This relabeling can be debugged via the http://vmagent:8429/metric-relabel-debug page. See these docs for details.

All the files with relabeling configs can contain special placeholders in the form %{ENV_VAR}, which are replaced by the corresponding environment variable values.

Streaming aggregation, if configured, is performed after applying all the relabeling stages mentioned above.

The following articles contain useful information about Prometheus relabeling:

- Cookbook for common relabeling tasks

- How to use Relabeling in Prometheus and VictoriaMetrics

- Life of a label

- Discarding targets and timeseries with relabeling

- Dropping labels at scrape time

- Extracting labels from legacy metric names

- relabel_configs vs metric_relabel_configs

Relabeling enhancements

vmagent provides the following enhancements on top of Prometheus-compatible relabeling:

- The replacement option can refer to arbitrary labels via {{label_name}} placeholders. Such placeholders are substituted with the corresponding label value. For example, the following relabeling rule sets the instance-job label value to host123-foo when applied to the metric with {instance="host123",job="foo"} labels:

  ```yaml
  - target_label: "instance-job"
    replacement: "{{instance}}-{{job}}"
  ```

- An optional if filter can be used for conditional relabeling. The if filter may contain an arbitrary time series selector. The action is performed only for samples that match the provided if filter. For example, the following relabeling rule keeps metrics matching the foo{bar="baz"} series selector, while dropping the rest of metrics:

  ```yaml
  - if: 'foo{bar="baz"}'
    action: keep
  ```

  This is equivalent to the less clear Prometheus-compatible relabeling rule:

  ```yaml
  - action: keep
    source_labels: [__name__, bar]
    regex: 'foo;baz'
  ```

  The if option may contain more than one filter. In this case the action is performed if at least a single filter matches the given sample. For example, the following relabeling rule adds the foo="bar" label to samples with job="foo" or instance="bar" labels:

  ```yaml
  - target_label: foo
    replacement: bar
    if:
    - '{job="foo"}'
    - '{instance="bar"}'
  ```

- The regex value can be split into multiple lines for improved readability and maintainability. These lines are automatically joined with the | char when parsed. For example, the following configs are equivalent:

  ```yaml
  - action: keep_metrics
    regex: "metric_a|metric_b|foo_.+"
  ```

  ```yaml
  - action: keep_metrics
    regex:
    - "metric_a"
    - "metric_b"
    - "foo_.+"
  ```
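The three enhancements above can be sketched in Python as follows. All function names are hypothetical. The if filter is simplified to exact label="value" matchers, while real series selectors also support !=, =~ and !~. The sketch therefore only illustrates the semantics: placeholder substitution, OR-ing of multiple filters, and joining of multi-line regex values with |.

```python
import re

# Hypothetical helpers illustrating three relabeling enhancements.


def render_replacement(template, labels):
    """Substitute {{label_name}} placeholders with label values.

    Assumption: a missing label is substituted with an empty string.
    """
    return re.sub(
        r"\{\{([A-Za-z_][A-Za-z0-9_]*)\}\}",
        lambda m: labels.get(m.group(1), ""),
        template,
    )


def matches_any(labels, filters):
    """Return True if at least one filter matches (OR semantics of `if` lists).

    Simplification: each filter is a dict of exact label="value" matchers,
    with the metric name stored under "__name__".
    """
    return any(
        all(labels.get(name, "") == value for name, value in f.items())
        for f in filters
    )


def join_regex(regex):
    """A list of regex lines is joined with "|" before compiling."""
    return "|".join(regex) if isinstance(regex, list) else regex


series = {"__name__": "foo", "instance": "host123", "job": "foo"}
print(render_replacement("{{instance}}-{{job}}", series))  # host123-foo
print(matches_any(series, [{"job": "foo"}, {"instance": "bar"}]))  # True
print(join_regex(["metric_a", "metric_b", "foo_.+"]))  # metric_a|metric_b|foo_.+
```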

VictoriaMetrics provides the following additional relabeling actions on top of standard actions from the Prometheus relabeling:

- replace_all replaces all the occurrences of regex in the values of source_labels with the replacement and stores the results in the target_label. For example, the following relabeling config replaces all the occurrences of the - char in metric names with the _ char (e.g. foo-bar-baz metric name is transformed into foo_bar_baz):

  ```yaml
  - action: replace_all
    source_labels: ["__name__"]
    target_label: "__name__"
    regex: "-"
    replacement: "_"
  ```

- labelmap_all replaces all the occurrences of regex in all the label names with the replacement. For example, the following relabeling config replaces all the occurrences of the - char in all the label names with the _ char (e.g. foo-bar-baz label name is transformed into foo_bar_baz):

  ```yaml
  - action: labelmap_all
    regex: "-"
    replacement: "_"
  ```

- keep_if_equal: keeps the entry if all the label values from source_labels are equal, while dropping all the other entries. For example, the following relabeling config keeps targets if they contain equal values for instance and host labels, while dropping all the other targets:

  ```yaml
  - action: keep_if_equal
    source_labels: ["instance", "host"]
  ```

- drop_if_equal: drops the entry if all the label values from source_labels are equal, while keeping all the other entries. For example, the following relabeling config drops targets if they contain equal values for instance and host labels, while keeping all the other targets:

  ```yaml
  - action: drop_if_equal
    source_labels: ["instance", "host"]
  ```

- keep_if_contains: keeps the entry if target_label contains all the label values listed in source_labels, while dropping all the other entries. For example, the following relabeling config keeps targets if __meta_consul_tags contains the value from the required_consul_tag label:

  ```yaml
  - action: keep_if_contains
    target_label: __meta_consul_tags
    source_labels: [required_consul_tag]
  ```

- drop_if_contains: drops the entry if target_label contains all the label values listed in source_labels, while keeping all the other entries. For example, the following relabeling config drops targets if __meta_consul_tags contains the value from the denied_consul_tag label:

  ```yaml
  - action: drop_if_contains
    target_label: __meta_consul_tags
    source_labels: [denied_consul_tag]
  ```

- keep_metrics: keeps all the metrics with names matching the given regex, while dropping all the other metrics. For example, the following relabeling config keeps metrics with foo and bar names, while dropping all the other metrics:

  ```yaml
  - action: keep_metrics
    regex: "foo|bar"
  ```

- drop_metrics: drops all the metrics with names matching the given regex, while keeping all the other metrics. For example, the following relabeling config drops metrics with foo and bar names, while leaving all the other metrics:

  ```yaml
  - action: drop_metrics
    regex: "foo|bar"
  ```

- graphite: applies Graphite-style relabeling to metric name. See these docs for details.
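The following is a simplified Python model of several of these actions. Labels are a dict, and a dropped entry is represented by None. The function names are hypothetical. The sketch treats replacement strings literally (no $1-style references) and relies on Python's re module, whose syntax differs slightly from the Go (RE2) regexp syntax used by VictoriaMetrics.

```python
import re

# Hypothetical, simplified implementations of the extra relabeling actions.
# Labels are a dict; an action returns the new labels or None if dropped.


def replace_all(labels, source_labels, target_label, regex, replacement, separator=";"):
    """Replace every (unanchored) occurrence of regex in the joined source values."""
    value = separator.join(labels.get(name, "") for name in source_labels)
    out = dict(labels)
    # The replacement is inserted literally; $1-style references are not handled.
    out[target_label] = re.sub(regex, lambda _m: replacement, value)
    return out


def labelmap_all(labels, regex, replacement):
    """Rewrite every occurrence of regex inside every label name in place."""
    return {re.sub(regex, lambda _m: replacement, name): value for name, value in labels.items()}


def keep_if_equal(labels, source_labels):
    values = {labels.get(name, "") for name in source_labels}
    return labels if len(values) == 1 else None


def drop_if_equal(labels, source_labels):
    return None if keep_if_equal(labels, source_labels) is not None else labels


def keep_metrics(labels, regex):
    # Relabeling regexes are anchored, so "foo|bar" does not match "foobar".
    return labels if re.fullmatch(regex, labels.get("__name__", "")) else None


def drop_metrics(labels, regex):
    return None if keep_metrics(labels, regex) is not None else labels


print(replace_all({"__name__": "foo-bar-baz"}, ["__name__"], "__name__", "-", "_"))
# {'__name__': 'foo_bar_baz'}
print(keep_metrics({"__name__": "foobar"}, "foo|bar"))  # None
```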

Graphite relabeling

VictoriaMetrics components support action: graphite relabeling rules, which allow extracting various parts from Graphite-style metrics into the configured labels with a syntax similar to Glob matching in statsd_exporter.

Note that the name field must be substituted with an explicit __name__ option under the labels section. If the __name__ option is missing under the labels section, then the original Graphite-style metric name is left unchanged.

For example, the following relabeling rule generates requests_total{job="app42",instance="host123:8080"} metric from app42.host123.requests.total Graphite-style metric:

```yaml
- action: graphite
  match: "*.*.*.total"
  labels:
    __name__: "${3}_total"
    job: "$1"
    instance: "${2}:8080"
```

Important notes about action: graphite relabeling rules:

- The relabeling rule is applied only to metrics that match the given match expression. Other metrics remain unchanged.
- The * matches the maximum possible number of chars until the next dot or until the next part of the match expression, whichever comes first. It may match zero chars if the next char is a dot. For example, match: "app*foo.bar" matches app42foo.bar, and 42 becomes available to use in the labels section via the $1 capture group.
- The $0 capture group matches the original metric name.
- The relabeling rules are executed in the order defined in the original config.

The action: graphite relabeling rules are easier to write and maintain than action: replace for labels extraction from Graphite-style metric names.

Additionally, the action: graphite relabeling rules usually work much faster than the equivalent action: replace rules.
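The matching rules listed above can be modeled with a short Python sketch. Each * captures characters up to the first occurrence of the next literal part of the match expression without crossing a dot, and $N or ${N} references in labels are replaced with the captured groups. The function names are hypothetical, consecutive ** is not handled, and edge cases may differ from vmagent's implementation.

```python
import re


def parse_match(template):
    """Split a match expression like "*.*.*.total" into literal parts and "*" parts."""
    parts, literal = [], ""
    for ch in template:
        if ch == "*":
            if literal:
                parts.append(literal)
                literal = ""
            parts.append("*")
        else:
            literal += ch
    if literal:
        parts.append(literal)
    return parts


def graphite_match(parts, name):
    """Return capture groups ($0 is the full name) or None if name doesn't match."""
    captures, rest, i = [name], name, 0
    while i < len(parts):
        part = parts[i]
        if part != "*":
            if not rest.startswith(part):
                return None
            rest = rest[len(part):]
            i += 1
            continue
        if i + 1 == len(parts):
            # A trailing "*" consumes the remainder, which must not contain dots.
            if "." in rest:
                return None
            captures.append(rest)
            return captures
        # "*" stops at the first occurrence of the next part and never crosses a dot.
        next_part = parts[i + 1]
        n = rest.find(next_part)
        if n < 0 or "." in rest[:n]:
            return None
        captures.append(rest[:n])
        rest = rest[n + len(next_part):]
        i += 2
    return captures if rest == "" else None


_REF = re.compile(r"\$\{(\d+)\}|\$(\d+)")


def render(template, captures):
    """Replace $N and ${N} with capture groups (out-of-range refs become empty)."""
    def sub(m):
        idx = int(m.group(1) or m.group(2))
        return captures[idx] if idx < len(captures) else ""
    return _REF.sub(sub, template)


def apply_graphite_rule(labels, match, label_templates):
    captures = graphite_match(parse_match(match), labels["__name__"])
    if captures is None:
        return labels  # non-matching metrics remain unchanged
    out = dict(labels)
    for name, template in label_templates.items():
        out[name] = render(template, captures)
    return out


print(apply_graphite_rule(
    {"__name__": "app42.host123.requests.total"},
    "*.*.*.total",
    {"__name__": "${3}_total", "job": "$1", "instance": "${2}:8080"},
))
# {'__name__': 'requests_total', 'job': 'app42', 'instance': 'host123:8080'}
```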

Relabel debug

vmagent and single-node VictoriaMetrics provide the following tools for debugging target-level and metric-level relabeling:

- Target-level debugging (e.g. relabel_configs section at scrape_configs) can be performed by navigating to http://vmagent:8429/targets page (http://victoriametrics:8428/targets page for single-node VictoriaMetrics) and clicking the debug target relabeling link at the target that must be debugged. The link is unavailable if vmagent runs with -promscrape.dropOriginalLabels command-line flag. The opened page shows step-by-step results for the actual target relabeling rules applied to the discovered target labels. The page also shows the target URL generated after applying all the relabeling rules.

  The http://vmagent:8429/targets page shows only active targets. If you need to understand why some target is dropped during the relabeling, then navigate to http://vmagent:8429/service-discovery page (http://victoriametrics:8428/service-discovery for single-node VictoriaMetrics), find the dropped target and click the debug link there. The link is unavailable if vmagent runs with -promscrape.dropOriginalLabels command-line flag. The opened page shows step-by-step results for the actual relabeling rules that result in the target being dropped.

- Metric-level debugging (e.g. metric_relabel_configs section at scrape_configs) can be performed by navigating to http://vmagent:8429/targets page (http://victoriametrics:8428/targets page for single-node VictoriaMetrics) and clicking the debug metrics relabeling link at the target that must be debugged. The link is unavailable if vmagent runs with -promscrape.dropOriginalLabels command-line flag. The opened page shows step-by-step results for the actual metric relabeling rules applied to the given target labels.

See also debugging scrape targets.

Debugging scrape targets

vmagent and single-node VictoriaMetrics provide the following tools for debugging scrape targets:

- http://vmagent:8429/targets page, which contains information about all the targets that are scraped at the moment. This page helps answer the following questions:
  - Why can't some targets be scraped? The last error column contains the reason why the given target cannot be scraped. You can also click the endpoint link in order to open the target URL in your browser. You can also click the response link in order to open the target URL on behalf of vmagent. This may be helpful when vmagent is located in some isolated network.
  - Which labels does the particular target have? The labels column shows per-target labels. These labels are attached to all the metrics scraped from the given target. You can also click on the target labels in order to see the original labels of the target before applying the relabeling. The original labels are unavailable if vmagent runs with -promscrape.dropOriginalLabels command-line flag.
  - Why does the given target have the given set of labels? Click the target link at the debug relabeling column for the particular target in order to see step-by-step execution of target relabeling rules applied to the original labels. This link is unavailable if vmagent runs with -promscrape.dropOriginalLabels command-line flag.
  - How are the given metric relabeling rules applied to scraped metrics? Click the metrics link at the debug relabeling column for the particular target in order to see step-by-step execution of metric relabeling rules applied to the scraped metrics.
  - How many failed scrapes were there for the particular target? The errors column shows this value.
  - How many metrics does the given target expose? The samples column shows the number of metrics scraped from each target during the last scrape.
  - How long does it take to scrape the given target? The duration column shows the last scrape duration for each target.
  - When was the last scrape for the given target? The last scrape column shows the last time the given target was scraped.
  - How many times was the given target scraped? The scrapes column shows this information.
  - What is the current state of the particular target? The state column shows the current state of the particular target.

- http://vmagent:8429/service-discovery page, which contains information about all the discovered targets. This page doesn't work if vmagent runs with -promscrape.dropOriginalLabels command-line flag. This page helps answer the following questions:
  - Why are some targets dropped during service discovery? Click the debug link at the debug relabeling column on the dropped target in order to see step-by-step execution of target relabeling rules applied to the original labels of the discovered target.
  - Why do some targets contain unexpected labels? Click the debug link at the debug relabeling column on the given target in order to see step-by-step execution of target relabeling rules applied to the original labels of the discovered target.
  - What were the original labels before relabeling for a particular target? The discovered labels column contains the original labels for each discovered target.

See also relabel debug.

Prometheus staleness markers

vmagent sends Prometheus staleness markers to -remoteWrite.url in the following cases:

- If they are passed to vmagent via Prometheus remote_write protocol.
- If the metric disappears from the list of scraped metrics, then a stale marker is sent for this particular metric.
- If the scrape target becomes temporarily unavailable, then stale markers are sent for all the metrics scraped from this target.
- If the scrape target is removed from the list of targets, then stale markers are sent for all the metrics scraped from this target.
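The following minimal Python sketch shows which series receive staleness markers. It compares sets of series keys from two consecutive scrapes instead of full response bodies, which is a simplification. The function name stale_markers is hypothetical. The NaN bit pattern 0x7ff0000000000002 is the staleness marker value used by Prometheus.

```python
import math
import struct

# Prometheus marks a series as stale by writing a NaN with this exact bit pattern.
STALE_NAN_BITS = 0x7FF0000000000002
STALE_NAN = struct.unpack("<d", struct.pack("<Q", STALE_NAN_BITS))[0]


def stale_markers(previous, current, timestamp_ms):
    """Return (series, timestamp, value) stale samples for series that disappeared.

    previous/current are sets of series keys from two consecutive scrapes of one
    target. Pass current=set() to model a target that became unavailable or was
    removed from the list of targets.
    """
    return [(series, timestamp_ms, STALE_NAN) for series in sorted(previous - current)]


prev = {'up{job="a"}', 'http_requests_total{path="/x"}'}
curr = {'up{job="a"}'}
for series, ts, value in stale_markers(prev, curr, 1_700_000_000_000):
    print(series, ts, math.isnan(value))
```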

Tracking Prometheus staleness markers needs additional memory, since vmagent must store the previous response body for each scrape target in order to compare it to the current response body. The memory usage may be reduced by disabling staleness tracking in the following ways:

- By passing -promscrape.noStaleMarkers command-line flag to vmagent. This disables staleness tracking across all the targets.
- By specifying no_stale_markers: true option in the scrape_config for the corresponding target.

When staleness tracking is disabled, vmagent doesn't track the number of new time series per each scrape, i.e. it sets scrape_series_added metric to zero. See these docs for details.

Stream parsing mode

By default, vmagent parses the full response from the scrape target, applies relabeling and then pushes the resulting metrics to the configured -remoteWrite.url in one go. This mode works well for the majority of cases when the scrape target exposes a small number of metrics (e.g. less than 10K). But this mode may take big amounts of memory when the scrape target exposes a big number of metrics (for example, when vmagent scrapes kube-state-metrics in a large Kubernetes cluster). It is recommended to enable stream parsing mode for such targets.

When this mode is enabled, vmagent processes the response from the scrape target in chunks. This allows saving memory when scraping targets that expose millions of metrics.

Stream parsing mode is automatically enabled for scrape targets returning response bodies with sizes bigger than the -promscrape.minResponseSizeForStreamParse command-line flag value. Additionally, stream parsing mode can be explicitly enabled in the following places:

- Via -promscrape.streamParse command-line flag. In this case all the scrape targets defined in the file pointed by -promscrape.config are scraped in stream parsing mode.
- Via stream_parse: true option at scrape_configs section. In this case all the scrape targets defined in this section are scraped in stream parsing mode.
- Via __stream_parse__=true label, which can be set via relabeling at relabel_configs section. In this case stream parsing mode is enabled for the corresponding scrape targets. Typical use case: to set the label via Kubernetes annotations for targets exposing a big number of metrics.

Examples:

```yaml
scrape_configs:
- job_name: 'big-federate'
  stream_parse: true
  static_configs:
  - targets:
    - big-prometheus1
    - big-prometheus2
  honor_labels: true
  metrics_path: /federate
  params:
    'match[]': ['{__name__!=""}']
```

Note that in stream parsing mode vmagent sends up to sample_limit samples to the configured -remoteWrite.url instead of dropping all the samples read from the target, because the parsed data is sent to the remote storage as soon as it is parsed.
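The difference in sample_limit handling between the two modes can be illustrated with a simplified Python sketch. Both functions are hypothetical. full_mode collects the whole response before checking the limit. stream_mode pushes fixed-size chunks as soon as they are parsed, so at most sample_limit samples reach remote storage and only one chunk is held in memory at a time.

```python
def full_mode(samples, sample_limit):
    """Parse everything first; if the limit is exceeded, nothing is sent."""
    parsed = list(samples)
    if sample_limit > 0 and len(parsed) > sample_limit:
        return []
    return parsed


def stream_mode(samples, sample_limit, chunk_size, push):
    """Push samples chunk by chunk; already pushed chunks cannot be retracted.

    Returns the number of pushed samples (never more than sample_limit when set).
    """
    sent, chunk = 0, []
    for sample in samples:
        if sample_limit > 0 and sent + len(chunk) >= sample_limit:
            break
        chunk.append(sample)
        if len(chunk) == chunk_size:
            push(chunk)
            sent += len(chunk)
            chunk = []
    if chunk:
        push(chunk)
        sent += len(chunk)
    return sent


pushed = []
print(stream_mode(range(10), 5, 2, pushed.append), pushed)  # 5 [[0, 1], [2, 3], [4]]
print(full_mode(range(10), 5))  # []
```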

Scraping big number of targets

A single vmagent instance can scrape tens of thousands of scrape targets. Sometimes this isn't enough due to limitations on CPU, network, RAM, etc. In this case scrape targets can be split among multiple vmagent instances (aka vmagent horizontal scaling, sharding and clustering).

The number of vmagent instances in the cluster must be passed to -promscrape.cluster.membersCount command-line flag. Each vmagent instance in the cluster must use identical -promscrape.config files with distinct -promscrape.cluster.memberNum values in the range 0 ... N-1, where N is the number of vmagent instances in the cluster specified via -promscrape.cluster.membersCount.

For example, the following commands spread scrape targets among a cluster of two vmagent instances:

```sh
/path/to/vmagent -promscrape.cluster.membersCount=2 -promscrape.cluster.memberNum=0 -promscrape.config=/path/to/config.yml ...
/path/to/vmagent -promscrape.cluster.membersCount=2 -promscrape.cluster.memberNum=1 -promscrape.config=/path/to/config.yml ...
```

The -promscrape.cluster.memberNum can be set to a StatefulSet pod name when vmagent runs in Kubernetes. The pod name must end with a number in the range 0 ... N-1, where N is the -promscrape.cluster.membersCount value. For example, -promscrape.cluster.memberNum=vmagent-0.

By default, each scrape target is scraped only by a single vmagent instance in the cluster. If there is a need for replicating scrape targets among multiple vmagent instances, then -promscrape.cluster.replicationFactor command-line flag must be set to the desired number of replicas. For example, the following commands start a cluster of three vmagent instances, where each target is scraped by two vmagent instances:

```sh
/path/to/vmagent -promscrape.cluster.membersCount=3 -promscrape.cluster.replicationFactor=2 -promscrape.cluster.memberNum=0 -promscrape.config=/path/to/config.yml ...
/path/to/vmagent -promscrape.cluster.membersCount=3 -promscrape.cluster.replicationFactor=2 -promscrape.cluster.memberNum=1 -promscrape.config=/path/to/config.yml ...
/path/to/vmagent -promscrape.cluster.membersCount=3 -promscrape.cluster.replicationFactor=2 -promscrape.cluster.memberNum=2 -promscrape.config=/path/to/config.yml ...
```
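To illustrate how targets could be spread across members with replication, here is a Python sketch. It hashes each target to a primary member and assigns the remaining replicas to the following members, wrapping around. Several details are assumptions made for illustration: the hashing scheme (SHA-256 of the target address), clamping the replication factor to the member count, and all function names. vmagent uses its own hash and target key, so real assignments will differ. parse_member_num shows how a StatefulSet pod name such as vmagent-1 maps to a member number.

```python
import hashlib
import re


def member_nums_for_target(target_key, members_count, replication_factor=1):
    """Return the member nums that scrape target_key (hypothetical scheme)."""
    if members_count <= 1:
        return [0]
    # Stand-in hash: vmagent's real hash function and target key differ.
    h = int.from_bytes(hashlib.sha256(target_key.encode()).digest()[:8], "big")
    first = h % members_count
    rf = max(1, min(replication_factor, members_count))  # clamped in this sketch
    return [(first + i) % members_count for i in range(rf)]


def targets_for_member(targets, member_num, members_count, replication_factor=1):
    """Targets that the given member scrapes; all others are dropped by sharding."""
    return [
        t for t in targets
        if member_num in member_nums_for_target(t, members_count, replication_factor)
    ]


def parse_member_num(value):
    """Accept either "1" or a StatefulSet pod name such as "vmagent-1"."""
    m = re.search(r"(\d+)$", value)
    if m is None:
        raise ValueError(f"cannot parse member num from {value!r}")
    return int(m.group(1))


targets = ["a:80", "b:80", "c:80", "d:80"]
for pod in ("vmagent-0", "vmagent-1", "vmagent-2"):
    num = parse_member_num(pod)
    print(pod, targets_for_member(targets, num, members_count=3, replication_factor=2))
```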

Every vmagent in the cluster exposes all the discovered targets at http://vmagent:8429/service-discovery page. Each discovered target on this page contains its status (UP, DOWN or DROPPED with the reason why the target has been dropped). If the target is dropped because of sharding to other vmagent instances in the cluster, then the status column contains -promscrape.cluster.memberNum values for vmagent instances where the given target is scraped.

The /service-discovery page provides links to the corresponding vmagent instances if -promscrape.cluster.memberURLTemplate command-line flag is set. Every occurrence of %d inside the -promscrape.cluster.memberURLTemplate is substituted with the -promscrape.cluster.memberNum for the corresponding vmagent instance. For example, -promscrape.cluster.memberURLTemplate='http://vmagent-instance-%d:8429/targets' generates http://vmagent-instance-42:8429/targets URL for the vmagent instance, which runs with -promscrape.cluster.memberNum=42.
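The %d substitution is a plain string replacement. Here it is sketched in Python with a hypothetical helper that builds one link per member num:

```python
def member_urls(template, member_nums):
    """Expand a -promscrape.cluster.memberURLTemplate value for the given member nums.

    Every occurrence of %d is substituted with the member num.
    """
    return [template.replace("%d", str(num)) for num in member_nums]


print(member_urls("http://vmagent-instance-%d:8429/targets", [42]))
# ['http://vmagent-instance-42:8429/targets']
```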

Note that vmagent shows up to -promscrape.maxDroppedTargets dropped targets on the /service-discovery page.

Increase the -promscrape.maxDroppedTargets command-line flag value if the /service-discovery page misses some dropped targets.

If each target is scraped by multiple vmagent instances, then data deduplication must be enabled at remote storage pointed by -remoteWrite.url. The -dedup.minScrapeInterval must be set to the scrape_interval configured at -promscrape.config. See these docs for details.
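The following is a simplified Python model of deduplication for samples received from several vmagent replicas. It groups timestamps into discrete intervals of -dedup.minScrapeInterval, and within each interval the sample with the biggest timestamp wins, with ties broken by the bigger value. The exact interval boundaries and tie-breaking used by VictoriaMetrics are assumptions here, and deduplicate is a hypothetical name.

```python
def deduplicate(samples, min_scrape_interval_ms):
    """Keep one sample per series per discrete interval.

    samples: iterable of (series, timestamp_ms, value) tuples from all replicas.
    """
    best = {}
    for series, ts, value in samples:
        bucket = -(-ts // min_scrape_interval_ms)  # ceil(ts / interval)
        key = (series, bucket)
        if key not in best or (ts, value) > best[key]:
            best[key] = (ts, value)
    return sorted((series, ts, value) for (series, _bucket), (ts, value) in best.items())


replica_a = [("up", 1000, 1.0), ("up", 16000, 1.0)]
replica_b = [("up", 2000, 1.0), ("up", 17000, 0.0)]
print(deduplicate(replica_a + replica_b, 15000))
# [('up', 2000, 1.0), ('up', 17000, 0.0)]
```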

The -promscrape.cluster.memberLabel command-line flag allows specifying a name for the member num label to add to all the scraped metrics. The value of the member num label is set to -promscrape.cluster.memberNum. For example, the following command instructs vmagent to add the vmagent_instance="0" label to all the metrics scraped by the given vmagent instance:

```sh
/path/to/vmagent -promscrape.cluster.membersCount=2 -promscrape.cluster.memberNum=0 -promscrape.cluster.memberLabel=vmagent_instance
```

See also how to shard data among multiple remote storage systems.

High availability

It is possible to run multiple identically configured vmagent instances or vmagent clusters, so they scrape the same set of targets and push the collected data to the same set of VictoriaMetrics remote storage systems. Two identically configured vmagent instances or clusters are usually called an HA pair.

When running HA pairs, deduplication must be configured at VictoriaMetrics side in order to de-duplicate received samples. See these docs for details.

It is also recommended to pass different values to -promscrape.cluster.name command-line flag for each vmagent instance or each vmagent cluster in HA setup. This is needed for proper data de-duplication. See this issue for details.

Scraping targets via a proxy

vmagent supports scraping targets via http, https and socks5 proxies. Proxy address must be specified in proxy_url option. For example, the following scrape config instructs target scraping via https proxy at https://proxy-addr:1234:

```yaml
scrape_configs:
- job_name: foo
  proxy_url: https://proxy-addr:1234
```

Proxy can be configured with the following optional settings:

- proxy_authorization for generic token authorization. See these docs.
- proxy_basic_auth for Basic authorization. See these docs.
- proxy_bearer_token and proxy_bearer_token_file for Bearer token authorization.
- proxy_oauth2 for OAuth2 config. See these docs.
- proxy_tls_config for TLS config. See these docs.
- proxy_headers for passing additional HTTP headers in requests to proxy.

For example:

```yaml
scrape_configs:
- job_name: foo
  proxy_url: https://proxy-addr:1234
  proxy_basic_auth:
    username: foobar
    password: secret
  proxy_tls_config:
    insecure_skip_verify: true
    cert_file: /path/to/cert
    key_file: /path/to/key
    ca_file: /path/to/ca
    server_name: real-server-name
  proxy_headers:
  - "Proxy-Auth: top-secret"
```

Disabling on-disk persistence

By default vmagent stores pending data, which cannot be sent to the configured remote storage systems in a timely manner, in the folder set by -remoteWrite.tmpDataPath command-line flag. vmagent writes all the pending data to this folder until this data is sent to the configured -remoteWrite.url systems or until the folder becomes full. The maximum data size, which can be saved to -remoteWrite.tmpDataPath per every configured -remoteWrite.url, can be limited via -remoteWrite.maxDiskUsagePerURL command-line flag. When this limit is reached, vmagent drops the oldest data from disk in order to save newly ingested data.
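The drop-oldest behavior of -remoteWrite.maxDiskUsagePerURL can be pictured with an in-memory Python stand-in. BoundedQueue is hypothetical. vmagent persists data in files under -remoteWrite.tmpDataPath, so the granularity at which it drops data may differ from the per-block dropping shown here.

```python
from collections import deque


class BoundedQueue:
    """FIFO of pending data blocks capped at max_bytes (hypothetical stand-in).

    When adding a block exceeds max_bytes, the oldest blocks are dropped first,
    so newly ingested data is kept.
    """

    def __init__(self, max_bytes):
        self.max_bytes = max_bytes
        self.blocks = deque()
        self.size = 0
        self.dropped_bytes = 0

    def push(self, block: bytes):
        self.blocks.append(block)
        self.size += len(block)
        while self.size > self.max_bytes and self.blocks:
            old = self.blocks.popleft()
            self.size -= len(old)
            self.dropped_bytes += len(old)

    def pop(self) -> bytes:
        """Take the oldest pending block for sending to remote storage."""
        block = self.blocks.popleft()
        self.size -= len(block)
        return block


q = BoundedQueue(max_bytes=10)
for block in (b"aaaa", b"bbbb", b"cccc"):
    q.push(block)
print(list(q.blocks), q.dropped_bytes)  # [b'bbbb', b'cccc'] 4
```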

There are cases when it is better to disable on-disk persistence for pending data at vmagent side:

- When the persistent disk performance isn't enough for the given data processing rate.
- When it is better to buffer pending data at the client side instead of buffering it at vmagent side in the -remoteWrite.tmpDataPath folder.
- When the data is already buffered at Kafka side or at Google PubSub side.
- When it is better to drop pending data instead of buffering it.

In this case -remoteWrite.disableOnDiskQueue command-line flag can be passed to vmagent per each configured -remoteWrite.url.

vmagent works in the following way if the corresponding remote storage system at -remoteWrite.url cannot keep up with the data ingestion rate and the -remoteWrite.disableOnDiskQueue command-line flag is set:

- It returns 429 Too Many Requests HTTP error to clients, which send data to vmagent via supported HTTP endpoints. If -remoteWrite.dropSamplesOnOverload command-line flag is set or if multiple -remoteWrite.disableOnDiskQueue command-line flags are set for different -remoteWrite.url options, then the ingested samples are silently dropped instead of returning the error to clients.
- It suspends consuming data from Kafka side or Google PubSub side until the remote storage becomes available. If -remoteWrite.dropSamplesOnOverload command-line flag is set or if multiple -remoteWrite.disableOnDiskQueue command-line flags are set for different -remoteWrite.url options, then the fetched samples are silently dropped instead of suspending data consumption from Kafka or Google PubSub.
- It drops samples pushed to vmagent via non-HTTP protocols and logs the error. Pass -remoteWrite.dropSamplesOnOverload command-line flag in order to suppress error messages in this case.
- It drops samples scraped from Prometheus-compatible targets, because it is better from operations perspective to drop samples instead of blocking the scrape process.
- It drops stream aggregation output samples, because it is better from operations perspective to drop output samples instead of blocking the stream aggregation process.
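The rules above can be condensed into a small decision function. This Python sketch only restates the listed behavior. The function name and the source labels (http, kafka_pubsub, non_http_push, scrape, stream_aggr) are hypothetical.

```python
def on_overload(source, drop_on_overload=False, multiple_disabled_queues=False):
    """What happens to new data when -remoteWrite.disableOnDiskQueue is set
    and remote storage cannot keep up.

    drop_on_overload:         -remoteWrite.dropSamplesOnOverload is set
    multiple_disabled_queues: -remoteWrite.disableOnDiskQueue is set for several URLs
    """
    silent_drop = drop_on_overload or multiple_disabled_queues
    if source == "http":
        return "drop silently" if silent_drop else "return 429 Too Many Requests"
    if source == "kafka_pubsub":
        return "drop silently" if silent_drop else "suspend consumption"
    if source == "non_http_push":
        # Only -remoteWrite.dropSamplesOnOverload suppresses the error log here.
        return "drop silently" if drop_on_overload else "drop and log error"
    if source in ("scrape", "stream_aggr"):
        return "drop"
    raise ValueError(f"unknown source {source!r}")


print(on_overload("http"))  # return 429 Too Many Requests
print(on_overload("kafka_pubsub", multiple_disabled_queues=True))  # drop silently
```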

The number of samples dropped because of overloaded remote storage can be monitored via vmagent_remotewrite_samples_dropped_total metric. The number of unsuccessful attempts to send data to overloaded remote storage can be monitored via vmagent_remotewrite_push_failures_total metric.

Running vmagent on hosts with more RAM or increasing the value for -memory.allowedPercent may reduce the number of unsuccessful attempts or dropped samples on spiky workloads, since vmagent may buffer more data in memory before returning the error or dropping data.

vmagent still may write pending in-memory data to -remoteWrite.tmpDataPath on graceful shutdown if -remoteWrite.disableOnDiskQueue command-line flag is specified. It may also read buffered data from -remoteWrite.tmpDataPath on startup.

When -remoteWrite.disableOnDiskQueue command-line flag is set, vmagent may send the same samples multiple times to the configured remote storage if it cannot keep up with the data ingestion rate. In this case the deduplication must be enabled on all the configured remote storage systems.

Cardinality limiter

By default, vmagent doesn't limit the number of time series each scrape target can expose. The limit can be enforced in the following places:

- Via -promscrape.seriesLimitPerTarget command-line flag. This limit is applied individually to all the scrape targets defined in the file pointed by -promscrape.config.
- Via series_limit config option at scrape_config section. The series_limit allows overriding the -promscrape.seriesLimitPerTarget on a per-scrape_config basis. If series_limit is set to 0 or to a negative value, then it isn't applied to the given scrape_config, even if -promscrape.seriesLimitPerTarget command-line flag is set.
- Via __series_limit__ label, which can be set with relabeling at relabel_configs section. The __series_limit__ allows overriding the series_limit on a per-target basis. If __series_limit__ is set to 0 or to a negative value, then it isn't applied to the given target. Typical use case: to set the limit via Kubernetes annotations for targets, which may expose too high number of time series.
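The precedence between the three places can be summarized with a short Python sketch. The function name is hypothetical, and limits are passed as integers, so the __series_limit__ label value would first need to be parsed from a string.

```python
def effective_series_limit(flag_limit=0, series_limit=None, label_limit=None):
    """Return the per-target series limit, or None when no limit applies.

    flag_limit:   -promscrape.seriesLimitPerTarget value (0 means unset)
    series_limit: series_limit from the scrape_config, None when absent
    label_limit:  __series_limit__ label set via relabeling, None when absent
    """
    # The most specific setting wins; 0 or a negative value disables the limit.
    for candidate in (label_limit, series_limit):
        if candidate is not None:
            return candidate if candidate > 0 else None
    return flag_limit if flag_limit > 0 else None


print(effective_series_limit(1000))                   # 1000
print(effective_series_limit(1000, series_limit=0))   # None
print(effective_series_limit(1000, 500, 20000))       # 20000
```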

Scraped metrics are dropped for time series exceeding the given limit over a 24h time window.

vmagent creates the following additional per-target metrics for targets with non-zero series limit:

- scrape_series_limit_samples_dropped - the number of dropped samples during the scrape when the unique series limit is exceeded.
- scrape_series_limit - the series limit for the given target.
- scrape_series_current - the current number of series for the given target.

These metrics are automatically sent to the configured -remoteWrite.url alongside the scraped per-target metrics.

These metrics allow building the following alerting rules:

- scrape_series_current / scrape_series_limit > 0.9 - alerts when the number of series exposed by the target reaches 90% of the limit.
- sum_over_time(scrape_series_limit_samples_dropped[1h]) > 0 - alerts when some samples are dropped because the series limit on a particular target is reached.

See also sample_limit option at scrape_config section.

By default, vmagent doesn't limit the number of time series written to remote storage systems specified at -remoteWrite.url. The limit can be enforced by setting the following command-line flags:

- -remoteWrite.maxHourlySeries - limits the number of unique time series vmagent can write to remote storage systems during the last hour. Useful for limiting the number of active time series.
- -remoteWrite.maxDailySeries - limits the number of unique time series vmagent can write to remote storage systems during the last day. Useful for limiting daily churn rate.

Both limits can be set simultaneously. If any of these limits is reached, then samples for new time series are dropped instead of sending them to remote storage systems. A sample of dropped series is put in the log with WARNING level.
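The following is a simplified Python model of a unique-series limiter such as -remoteWrite.maxHourlySeries. vmagent tracks series approximately. This hypothetical SeriesLimiter instead keeps an exact set and starts a fresh window once window_seconds have passed; the fixed (tumbling) window is an assumption of the sketch. Samples for already known series keep passing, while samples for new series are dropped once the limit is reached.

```python
class SeriesLimiter:
    """Exact unique-series limiter over a fixed window (hypothetical sketch)."""

    def __init__(self, max_series, window_seconds, now=0.0):
        self.max_series = max_series
        self.window = window_seconds
        self.window_start = now
        self.seen = set()
        self.rows_dropped = 0  # mirrors the idea of *_rows_dropped_total

    def allow(self, series, now):
        if now - self.window_start >= self.window:
            # Start a new window: previously seen series no longer count.
            self.seen.clear()
            self.window_start = now
        if series in self.seen:
            return True
        if len(self.seen) < self.max_series:
            self.seen.add(series)
            return True
        self.rows_dropped += 1
        return False


hourly = SeriesLimiter(max_series=2, window_seconds=3600)
print([hourly.allow(s, t) for s, t in [("a", 0), ("b", 10), ("a", 20), ("c", 30)]])
# [True, True, True, False]
```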

vmagent exposes the following metrics at http://vmagent:8429/metrics page (see monitoring docs for details):

- vmagent_hourly_series_limit_rows_dropped_total - the number of metrics dropped due to exceeded hourly limit on the number of unique time series.
- vmagent_hourly_series_limit_max_series - the hourly series limit set via -remoteWrite.maxHourlySeries.
- vmagent_hourly_series_limit_current_series - the current number of unique series registered during the last hour.
- vmagent_daily_series_limit_rows_dropped_total - the number of metrics dropped due to exceeded daily limit on the number of unique time series.
- vmagent_daily_series_limit_max_series - the daily series limit set via -remoteWrite.maxDailySeries.
- vmagent_daily_series_limit_current_series - the current number of unique series registered during the last day.

These limits are approximate, so vmagent can underflow/overflow the limit by a small percentage (usually less than 1%).

See also cardinality explorer docs.

Monitoring

vmagent exports various metrics in Prometheus exposition format at http://vmagent-host:8429/metrics page. We recommend setting up regular scraping of this page either through vmagent itself or by a Prometheus-compatible scraper, so that the exported metrics may be analyzed later.

If you use Google Cloud Managed Prometheus for scraping metrics from VictoriaMetrics components, then pass -metrics.exposeMetadata command-line flag to them, so they add TYPE and HELP comments per each exposed metric at /metrics page. See these docs for details.

Use official Grafana dashboard for vmagent state overview.

Graphs on this dashboard contain useful hints - hover the i icon at the top left corner of each graph in order to read it.

If you have suggestions for improvements or have found a bug - please open an issue on github or add a review to the dashboard.

vmagent also exports the status for various targets at the following pages:

- http://vmagent-host:8429/targets. This page shows the current status for every active target.
- http://vmagent-host:8429/service-discovery. This page shows the list of discovered targets with the discovered __meta_* labels according to these docs. This page may help debugging target relabeling.
- http://vmagent-host:8429/api/v1/targets. This handler returns JSON response compatible with the corresponding page from Prometheus API.
- http://vmagent-host:8429/ready. This handler returns HTTP 200 status code when vmagent finishes its initialization for all the service_discovery configs. It may be useful to perform vmagent rolling update without any scrape loss.

Troubleshooting

-

It is recommended setting up the official Grafana dashboard in order to monitor the state of `vmagent'.

-

It is recommended increasing the maximum number of open files in the system (

ulimit -n) when scraping a big number of targets, asvmagentestablishes at least a single TCP connection per target. -

If

vmagentuses too big amounts of memory, then the following options can help:- Reducing the amounts of RAM vmagent can use for in-memory buffering with

-memory.allowedPercentor-memory.allowedBytescommand-line flag. Another option is to reduce memory limits in Docker and/or Kubernetes ifvmagentruns under these systems. - Reducing the number of CPU cores vmagent can use by passing

GOMAXPROCS=Nenvironment variable tovmagent, whereNis the desired limit on CPU cores. Another option is to reduce CPU limits in Docker or Kubernetes ifvmagentruns under these systems. - Disabling staleness tracking with

-promscrape.noStaleMarkersoption. See these docs. - Enabling stream parsing mode if

vmagentscrapes targets with millions of metrics per target. See these docs. - Reducing the number of tcp connections to remote storage systems with

-remoteWrite.queuescommand-line flag. - Passing

-promscrape.dropOriginalLabelscommand-line flag tovmagentif it discovers big number of targets and many of these targets are dropped before scraping. In this casevmagentdrops"discoveredLabels"and"droppedTargets"lists athttp://vmagent-host:8429/service-discoverypage. This reduces memory usage when scraping big number of targets at the cost of reduced debuggability for improperly configured per-target relabeling.

- Reducing the amounts of RAM vmagent can use for in-memory buffering with

-

When

vmagentscrapes many unreliable targets, it can flood the error log with scrape errors. It is recommended investigating and fixing these errors. If it is unfeasible to fix all the reported errors, then they can be suppressed by passing-promscrape.suppressScrapeErrorscommand-line flag tovmagent. The most recent scrape error per each target can be observed athttp://vmagent-host:8429/targetsandhttp://vmagent-host:8429/api/v1/targets. -

The

http://vmagent-host:8429/service-discoverypage could be useful for debugging relabeling process for scrape targets. This page contains original labels for targets dropped during relabeling. By default, the-promscrape.maxDroppedTargetstargets are shown here. If your setup drops more targets during relabeling, then increase-promscrape.maxDroppedTargetscommand-line flag value to see all the dropped targets. Note that tracking each dropped target requires up to 10Kb of RAM. Therefore, big values for-promscrape.maxDroppedTargetsmay result in increased memory usage if a big number of scrape targets are dropped during relabeling. -

- It is recommended to increase -remoteWrite.queues if the vmagent_remotewrite_pending_data_bytes metric grows constantly. It is also recommended to increase the -remoteWrite.maxBlockSize and -remoteWrite.maxRowsPerBlock command-line flags in this case. This can improve data ingestion performance to the configured remote storage systems at the cost of higher memory usage.

- If you see gaps in the data pushed by vmagent to remote storage when -remoteWrite.maxDiskUsagePerURL is set, try increasing -remoteWrite.queues. Such gaps may appear because vmagent cannot keep up with sending the collected data to remote storage, so it starts dropping the buffered data once the on-disk buffer size exceeds -remoteWrite.maxDiskUsagePerURL.

- vmagent drops data blocks if remote storage replies with 400 Bad Request and 409 Conflict HTTP responses. The number of dropped blocks can be monitored via the vmagent_remotewrite_packets_dropped_total metric exported at the /metrics page.

- Use -remoteWrite.queues=1 when -remoteWrite.url points to remote storage which doesn't accept out-of-order samples (aka data backfilling). Such storage systems include Prometheus, Mimir, Cortex and Thanos, which typically emit "out of order sample" errors. The best solution is to use remote storage with backfilling support such as VictoriaMetrics.

- vmagent buffers scraped data at the -remoteWrite.tmpDataPath directory until it is sent to -remoteWrite.url. The directory can grow large when remote storage is unavailable for extended periods of time and if the maximum directory size isn't limited with -remoteWrite.maxDiskUsagePerURL command-line flag. If you don't want to send all the buffered data from the directory to remote storage, then simply stop vmagent and delete the directory.

- If vmagent runs on a host with slow persistent storage, which cannot keep up with the volume of processed samples, then it is possible to disable the persistent storage with -remoteWrite.disableOnDiskQueue command-line flag. See these docs for more details.

- By default vmagent masks -remoteWrite.url with secret-url values in logs and at the /metrics page because the url may contain sensitive information such as auth tokens or passwords. Pass -remoteWrite.showURL command-line flag when starting vmagent in order to see all the valid urls.

- By default vmagent evenly spreads scrape load in time. If a particular scrape target must be scraped at the beginning of some interval, then the scrape_align_interval option must be used. For example, the following config aligns hourly scrapes to the beginning of the hour:

      scrape_configs:
      - job_name: foo
        scrape_interval: 1h
        scrape_align_interval: 1h

- By default vmagent evenly spreads scrape load in time. If a particular scrape target must be scraped at a specific offset, then the scrape_offset option must be used. For example, the following config instructs vmagent to scrape the target at 10 seconds of every minute:

      scrape_configs:
      - job_name: foo
        scrape_interval: 1m
        scrape_offset: 10s

- If you see "skipping duplicate scrape target with identical labels" errors when scraping Kubernetes pods, then it is likely these pods listen to multiple ports or they use an init container. These errors can either be fixed or suppressed with the -promscrape.suppressDuplicateScrapeTargetErrors command-line flag. See the available options below if you prefer fixing the root cause of the error:

  The following relabeling rule may be added to the relabel_configs section in order to filter out pods with unneeded ports:

      - action: keep_if_equal
        source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_port, __meta_kubernetes_pod_container_port_number]

  The following relabeling rule may be added to the relabel_configs section in order to filter out init container pods:

      - action: drop
        source_labels: [__meta_kubernetes_pod_container_init]
        regex: true
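The two relabeling rules above can be modeled in a few lines of Go to see why they remove the duplicates. This is a minimal sketch assuming standard Prometheus relabeling semantics (a missing label reads as an empty string, source label values are joined with the default ";" separator, and the regex is anchored at both ends); keepIfEqual and dropMatches are hypothetical helpers, not vmagent code. With keep_if_equal, a pod target survives only when its container port equals the port from the prometheus.io/port annotation, so the extra per-port targets of the same pod are discarded.

```go
package main

import (
	"fmt"
	"regexp"
	"strings"
)

// keepIfEqual is a hypothetical model of the keep_if_equal action:
// the target is kept only if all the listed source labels have identical values.
// Missing labels are treated as empty strings.
func keepIfEqual(labels map[string]string, sourceLabels []string) bool {
	if len(sourceLabels) == 0 {
		return true
	}
	first := labels[sourceLabels[0]]
	for _, name := range sourceLabels[1:] {
		if labels[name] != first {
			return false
		}
	}
	return true
}

// dropMatches is a hypothetical model of the drop action: source label values
// are joined with ";" and the target is dropped if the anchored regex matches.
func dropMatches(labels map[string]string, sourceLabels []string, regex string) bool {
	values := make([]string, 0, len(sourceLabels))
	for _, name := range sourceLabels {
		values = append(values, labels[name])
	}
	re := regexp.MustCompile("^(?:" + regex + ")$")
	return re.MatchString(strings.Join(values, ";"))
}

func main() {
	// A pod target discovered for container port 9090 while the
	// prometheus.io/port annotation points at 8080.
	pod := map[string]string{
		"__meta_kubernetes_pod_annotation_prometheus_io_port": "8080",
		"__meta_kubernetes_pod_container_port_number":         "9090",
		"__meta_kubernetes_pod_container_init":                "false",
	}
	portLabels := []string{
		"__meta_kubernetes_pod_annotation_prometheus_io_port",
		"__meta_kubernetes_pod_container_port_number",
	}
	fmt.Println("kept by keep_if_equal:", keepIfEqual(pod, portLabels))
	fmt.Println("dropped as init container:", dropMatches(pod, []string{"__meta_kubernetes_pod_container_init"}, "true"))
}
```

In this example the target for port 9090 is not kept, while the target whose container port equals the annotated port would be.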

See also:

Calculating disk space for persistence queue

vmagent buffers collected metrics on disk at the directory specified via -remoteWrite.tmpDataPath command-line flag until the metrics are sent to remote storage configured via -remoteWrite.url command-line flag. The -remoteWrite.tmpDataPath directory can grow large when remote storage is unavailable for extended periods of time and if the maximum directory size isn't limited with -remoteWrite.maxDiskUsagePerURL command-line flag.

To estimate the disk size to allocate for the persistent queue, or to estimate the -remoteWrite.maxDiskUsagePerURL command-line flag value, take into account the following attributes:

- The size in bytes of the data stream sent by vmagent:

  Run the query sum(rate(vmagent_remotewrite_bytes_sent_total[1h])) by(instance,url) in vmui or Grafana to get the number of bytes per second sent by each vmagent instance to each -remoteWrite.url.

- The amount of time the persistent queue should keep the data before starting to drop it.

  For example, if vmagent should be able to buffer the data for at least 6 hours, then the following query can be used for estimating the needed amount of disk space in GiB:

      sum(rate(vmagent_remotewrite_bytes_sent_total[1h])) by(instance,url) * 6h / 1Gi

Additional notes:

- Ensure that vmagent monitoring is configured properly.
- Re-evaluate the estimation each time when:
  - there is an increase in the vmagent's workload
  - there is a change in relabeling rules which could increase the amount of metrics to send
  - there is a change in the number of configured -remoteWrite.url addresses
- The minimum disk size to allocate for the persistent queue is 500Mi for each -remoteWrite.url.
- On-disk persistent queue can be disabled if needed. See these docs.

Google PubSub integration

Enterprise version of vmagent can read and write metrics from / to Google PubSub:

Reading metrics from PubSub

Enterprise version of vmagent can read metrics in various formats from Google PubSub messages.

-gcp.pubsub.subscribe.defaultMessageFormat and -gcp.pubsub.subscribe.topicSubscription.messageFormat command-line flags allow configuring the needed message format.

The following message formats are supported:

- promremotewrite: Prometheus remote_write. Messages in this format can be sent by vmagent, see these docs.
- influx: InfluxDB line protocol format.
- prometheus: Prometheus text exposition format and OpenMetrics format.
- graphite: Graphite plaintext format.
- jsonline: JSON line format.

Every PubSub message may contain multiple lines in influx, prometheus, graphite and jsonline format delimited by \n.
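On the consumer side, handling such a message amounts to splitting its payload on \n and parsing each non-empty line in the configured format. Below is a minimal Go sketch of the splitting step only, using a hypothetical splitLines helper; it is not vmagent's parser, and the default 64KiB line limit of bufio.Scanner would need raising for very long lines.

```go
package main

import (
	"bufio"
	"bytes"
	"fmt"
)

// splitLines is a hypothetical helper that splits a PubSub message payload
// into individual lines, skipping empty ones (e.g. after a trailing "\n").
// bufio.Scanner also strips a trailing "\r" from each line.
func splitLines(payload []byte) ([]string, error) {
	var lines []string
	sc := bufio.NewScanner(bytes.NewReader(payload))
	for sc.Scan() {
		if line := sc.Text(); line != "" {
			lines = append(lines, line)
		}
	}
	return lines, sc.Err()
}

func main() {
	// Hypothetical message carrying two samples in InfluxDB line protocol.
	msg := []byte("cpu,host=a usage=1.5\ncpu,host=b usage=2.5\n")
	lines, err := splitLines(msg)
	if err != nil {
		panic(err)
	}
	for _, l := range lines {
		fmt.Println(l)
	}
}
```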

vmagent consumes messages from PubSub topic subscriptions specified by -gcp.pubsub.subscribe.topicSubscription command-line flag.

Multiple topics can be specified by passing multiple -gcp.pubsub.subscribe.topicSubscription command-line flags to vmagent.

vmagent uses the standard Google authorization mechanism for topic access. It is possible to specify credentials directly via -gcp.pubsub.subscribe.credentialsFile command-line flag.

For example, the following command starts vmagent, which reads metrics in InfluxDB line protocol format from PubSub projects/victoriametrics-vmagent-pub-sub-test/subscriptions/telegraf-testing and sends them to remote storage at http://localhost:8428/api/v1/write:

./bin/vmagent -remoteWrite.url=http://localhost:8428/api/v1/write \
  -gcp.pubsub.subscribe.topicSubscription=projects/victoriametrics-vmagent-pub-sub-test/subscriptions/telegraf-testing \
  -gcp.pubsub.subscribe.topicSubscription.messageFormat=influx

It is expected that Telegraf sends metrics to the telegraf-testing topic at the victoriametrics-vmagent-pub-sub-test project with the following config:

[[outputs.cloud_pubsub]]
  project = "victoriametrics-vmagent-pub-sub-test"
  topic = "telegraf-testing"
  data_format = "influx"

vmagent buffers messages read from the Google PubSub topic on local disk if the remote storage at -remoteWrite.url cannot keep up with the data ingestion rate. In this case it may be useful to disable on-disk data persistence in order to prevent unbounded growth of the on-disk queue. See these docs.

See also how to write metrics to multiple distinct tenants.

Consume metrics from multiple topics

vmagent can read messages from different topics in different formats. For example, the following command starts vmagent, which reads plaintext Influx messages from the telegraf-testing topic and gzipped JSON line messages from the json-line-testing topic:

./bin/vmagent -remoteWrite.url=http://localhost:8428/api/v1/write \
  -gcp.pubsub.subscribe.topicSubscription=projects/victoriametrics-vmagent-pub-sub-test/subscriptions/telegraf-testing \
  -gcp.pubsub.subscribe.topicSubscription.messageFormat=influx \
  -gcp.pubsub.subscribe.topicSubscription.isGzipped=false \
  -gcp.pubsub.subscribe.topicSubscription=projects/victoriametrics-vmagent-pub-sub-test/subscriptions/json-line-testing \
  -gcp.pubsub.subscribe.topicSubscription.messageFormat=jsonline \
  -gcp.pubsub.subscribe.topicSubscription.isGzipped=true

Command-line flags for PubSub consumer

These command-line flags are available only in the enterprise version of vmagent, which can be downloaded for evaluation from the releases page (see vmutils-...-enterprise.tar.gz archives) and from docker images with tags containing the enterprise suffix.

-gcp.pubsub.subscribe.credentialsFile string

Path to file with GCP credentials to use for PubSub client. If not set, default credentials are used (see Workload Identity for K8S or https://cloud.google.com/docs/authentication/application-default-credentials ). See https://docs.victoriametrics.com/vmagent/#reading-metrics-from-pubsub . This flag is available only in Enterprise binaries. See https://docs.victoriametrics.com/enterprise/

-gcp.pubsub.subscribe.defaultMessageFormat string

Default message format if -gcp.pubsub.subscribe.topicSubscription.messageFormat is missing. See https://docs.victoriametrics.com/vmagent/#reading-metrics-from-pubsub . This flag is available only in Enterprise binaries. See https://docs.victoriametrics.com/enterprise/ (default "promremotewrite")

-gcp.pubsub.subscribe.topicSubscription array

GCP PubSub topic subscription in the format: projects/<project-id>/subscriptions/<subscription-name>. See https://docs.victoriametrics.com/vmagent/#reading-metrics-from-pubsub . This flag is available only in Enterprise binaries. See https://docs.victoriametrics.com/enterprise/

Supports an array of values separated by comma or specified via multiple flags.

-gcp.pubsub.subscribe.topicSubscription.concurrency array

The number of concurrently processed messages for topic subscription specified via -gcp.pubsub.subscribe.topicSubscription flag. See https://docs.victoriametrics.com/vmagent/#reading-metrics-from-pubsub . This flag is available only in Enterprise binaries. See https://docs.victoriametrics.com/enterprise/ (default 0)

Supports array of values separated by comma or specified via multiple flags.

-gcp.pubsub.subscribe.topicSubscription.isGzipped array

Enables gzip decompression for messages payload at the corresponding -gcp.pubsub.subscribe.topicSubscription. Only prometheus, jsonline, graphite and influx formats accept gzipped messages. See https://docs.victoriametrics.com/vmagent/#reading-metrics-from-pubsub . This flag is available only in Enterprise binaries. See https://docs.victoriametrics.com/enterprise/

Supports array of values separated by comma or specified via multiple flags.

-gcp.pubsub.subscribe.topicSubscription.messageFormat array

Message format for the corresponding -gcp.pubsub.subscribe.topicSubscription. Valid formats: influx, prometheus, promremotewrite, graphite, jsonline . See https://docs.victoriametrics.com/vmagent/#reading-metrics-from-pubsub . This flag is available only in Enterprise binaries. See https://docs.victoriametrics.com/enterprise/

Supports an array of values separated by comma or specified via multiple flags.

Writing metrics to PubSub

Enterprise version of vmagent writes data into Google PubSub if -remoteWrite.url command-line flag starts with pubsub: prefix.